Monthly Archives: July 2011

Exceptional DBA Awards 2011

Time has come for the annual Exceptional DBA Awards contest, sponsored by Red Gate and judged by four really exceptional DBAs:

- Steve Jones (blog|twitter)

- Rodney Landrum (blog|twitter)

- Brad McGehee (blog|twitter)

- Brent Ozar (blog|twitter)

The judges picked their finalists and it would really be hard to choose the winner if I didn’t happen to know one of them.

I won’t talk around it: please vote for Jeff Moden!

I don’t know the other three finalists and I am sure that they really are very good DBAs, probably exceptional DBAs, otherwise they would not have made it to the final showdown. But I have no doubt that Jeff is the one to vote.

There is something that goes beyond being exceptional. I can’t give it a name, but I will try to explain it.

Some years ago, I was working primarily as a developer at a big shoe company and I was one of those “Accidental” DBAs lurking on the SQL Server Central forums struggling to expand their knowledge. I already had a long experience in database development, but, at that time, I also needed to start learning how to take care of my databases. Whenever I had an issue, SQL Server Central had a thread with an answer for me.

One day, I stumbled upon a question and, surprisingly enough, I happened to know the answer. That day, when I hit the “reply” button, I had no idea of the great journey that was ahead of me.

SQL Server Central people are exceptional and the forums are totally addictive. Long story short, I became one of the “regulars”. I could not stay away from the forums and checking the e-mail notifications became part of my morning tasks.

Among the other regulars, there was one folk with a funny signature, a sort of manifesto of the anti-RBAR party. “RBAR”: a made-up word that probably very few people knew at the time, which now is just the right word to say it when you do it “Row By Agonizing Row”!

That guy with the funny signature was one of the most active members and it looked like he spent the whole night posting on the forums (and he probably did). His replies were always smart, spot-on and humorous.

He also had published some articles where he preached avoiding cursors like the plague and replacing them with the T-SQL Swiss army knife “par excellence”: the Tally table.

Needless to say, the folk’s name is Jeff Moden.

His articles are always enlightening and thorough, shipped with the complete code and solid performance demonstration. Jeff’s writing style is unique and engaging: you would recognize one of his articles even if he published under a pseudonym (which he did, actually. Remember Phil McCracken?).

His articles are always enlightening and thorough, shipped with the complete code and solid performance demonstration. Jeff’s writing style is unique and engaging: you would recognize one of his articles even if he published under a pseudonym (which he did, actually. Remember Phil McCracken?).

I have never met Jeff in person, but I consider him a good friend. He also helped me write my first article for SSC: his hair must have turned white when he read the first draft and his beard must have grown an inch when he saw my poor English. Nonetheless, his kind words, encouragement and suggestions helped me accomplish that article and I consider him one of the main culprits if now I’m not just a thread-aholic, but I also turned into a blog-aholic.

Jeff deserves your vote, because he is an exceptional person and an exceptional DBA. People like him are more than exceptional: they’re one of a kind. I don’t know the other three finalists, but I want to believe they’re not as exceptional as Jeff, otherwise I would have to feel even more humbled compared to them.

Go vote for Jeff, and, even more important, read his articles. You will find them enjoyable and inspiring.

Table-level CHECK constraints

EDITED 2011-08-05: This post is NOT about the “correct” way to implement table-level check constraints. If that is what you’re looking for, see this post instead.

Today on SQL Server Central I stumbled upon an apparently simple question on CHECK constraints. The question can be found here.

The OP wanted to know how to implement a CHECK constraint based on data from another table. In particular, he wanted to prohibit modifications to records in a detail table based on a datetime column on the master table. A simple way to achieve it is to use a trigger, but he was concerned about performance and wanted to implement it with a CHECK constraint.

Let’s see how this can be done. First of all, we will need a couple of test tables, with some sample data:

USE tempdb;

GO

-- Create master table

CREATE TABLE masterTable (

id int identity(1,1) PRIMARY KEY,

dateColumn datetime

)

GO

-- Create referenced table

CREATE TABLE detailTable (

id int identity(1,1) PRIMARY KEY,

master_id int FOREIGN KEY REFERENCES masterTable(id),

valueColumn varchar(50)

)

GO

-- Insert sample data

INSERT INTO masterTable(dateColumn) VALUES(GETDATE())

INSERT INTO masterTable(dateColumn) VALUES(DATEADD(day,-1,GETDATE()))

INSERT INTO masterTable(dateColumn) VALUES(DATEADD(day,-2,GETDATE()))

SELECT * FROM masterTable

-- Insert sample data in referenced table

INSERT INTO detailTable(master_id, valueColumn) VALUES (1,'Value for id 1')

INSERT INTO detailTable(master_id, valueColumn) VALUES (2,'Value for id 2')

INSERT INTO detailTable(master_id, valueColumn) VALUES (3,'Value for id 3')

SELECT * FROM detailTable

Now we need to create the CHECK constraint. The only way to code it to validate data against a different table is to use a scalar UDF, which, to answer the original question, makes it a poor choice from a performance standpoint.

-- Create a scalar UDF: you will need this in the CHECK constraint

CREATE FUNCTION getMasterDate(@id int)

RETURNS datetime

AS

BEGIN

RETURN ISNULL((SELECT dateColumn FROM masterTable WHERE id = @id),'30110101')

END

GO

-- Add the constraint WITH NOCHECK: existing rows are not affected

ALTER TABLE detailTable WITH NOCHECK ADD CONSTRAINT chk_date

CHECK (DATEADD(day,-1,GETDATE()) > dbo.getMasterDate(master_id))

No surprises: the constraints gets added to the table and SQL Server does not complain about the existing rows that don’t pass the check, since we decided to use the NOCHECK switch.

Now, with the constraint in place, we should be unable to modify the data in a way that violates the constraint:

UPDATE detailTable SET valueColumn = 'New ' + valueColumn

Surprise! Some rows conflict with the CHECK constraint, but no complaints from SQL Server, because the constraint is attached to a single column (master_id), and we left that column untouched.

In fact, if we query sys.check_constraints, we can see that this is a column-scoped constraint:

SELECT parent_column_id, definition, is_not_trusted

FROM sys.check_constraints

WHERE parent_object_id = OBJECT_ID('detailTable')

Parent_column_id = 0 means table scoped constraint, parent_column_id > 0 means column-scoped constraint.

If we try to update the column “master_id”, the constraint prevents the modification:

-- If you try to update master_id it fails UPDATE detailTable SET master_id = master_id + 1 - 1

Msg 547, Level 16, State 0, Line 2 The UPDATE statement conflicted with the CHECK constraint "chk_date". The conflict occurred in database "tempdb", table "dbo.detailTable", column 'master_id'. The statement has been terminated.

What is really surprising is how SQL Server behaves if we decide to make the constraint table-scoped, adding another predicate to the CHECK:

-- Drop the constraint

ALTER TABLE detailTable DROP CONSTRAINT chk_date

GO

-- Create the constraint referencing multiple columns

ALTER TABLE detailTable WITH NOCHECK ADD CONSTRAINT chk_date

CHECK (

DATEADD(day,-1,GETDATE()) > dbo.getMasterDate(master_id)

AND

ISNULL(valueColumn,'') = ISNULL(valueColumn,SPACE(0))

)

GO

As we might expect, it doesn’t work any more:

UPDATE detailTable SET valueColumn = 'New ' + valueColumn

Msg 547, Level 16, State 0, Line 2 The UPDATE statement conflicted with the CHECK constraint "chk_date". The conflict occurred in database "tempdb", table "dbo.detailTable", column 'master_id'. The statement has been terminated.

But, wait: what is REALLY attaching the constraint to the column we are trying to modify? Does a thing such as a “table-scoped” constraint really exist?

We just need to add another column and see how it changes the behaviour of the CHECK constraint:

ALTER TABLE detailTable ADD anotherColumn int

Now, if we try to update the newly created column, since we have a “table-scoped” CHECK constraint, we should get an error:

UPDATE detailTable SET anotherColumn = 1

… but it’s not so!

(3 row(s) affected)

The constraint does not include any reference to “anotherColumn”, so it does not even get executed. If you don’t believe it is so, you can check with Profiler and capture any call to scalard UDFs: you wan’t see any in this case.

This means that what Microsoft calls a table level CHECK constraint is something that does not really exist and a better name for it would be “Multicolumn CHECK constraint”.

The main thing to keep in mind is that if we want the constraint to check the data regardless of the column getting modified we MUST include ALL the columns of the table in the constraint definition.

Oracle: does PARALLEL_DEGREE_LIMIT really limit the DOP?

Understanding Oracle Parallel Execution in 11.2 is a pain, not because the topic itself is overly complex, rather because Oracle made it much more complicated than it needed to be.

Basically, the main initialization parameter that controls the parallel execution is PARALLEL_DEGREE_POLICY.

According to Oracle online documentation, this parameter can be set to:

- MANUAL: Disables automatic degree of parallelism, statement queuing, and in-memory parallel execution. This reverts the behaviour of parallel execution to what it was prior to Oracle Database 11g Release 2 (11.2). This is the default.

- AUTO: Enables automatic degree of parallelism, statement queuing, and in-memory parallel execution.

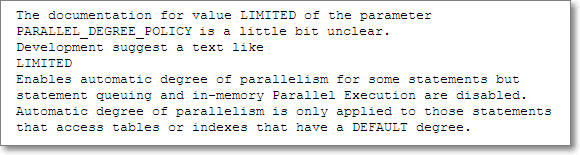

- LIMITED: Enables automatic degree of parallelism for some statements but statement queuing and in-memory Parallel Execution are disabled. Automatic degree of parallelism is only applied to those statements that access tables or indexes decorated explicitly with the PARALLEL clause. Tables and indexes that have a degree of parallelism specified will use that degree of parallelism.

MANUAL

Using the manual degree policy means that tables and indexes decorated with the PARALLEL clause are accessed using the DOP specified on the object (either default or explicit). The default DOP is CPU_COUNT * PARALLEL_THREADS_PER_CPU.

When a query gets parallelized, the physical access plan is sliced into separate threads, called parallel slaves, that, in turn, consume a parallel server each. When all the parallel servers are exhausted (or there are less parallel servers available than the ones requested) the queries start getting downgraded to a lower DOP.

AUTO

This means that Oracle will choose the appropriate DOP to access tables and indexes, based on the size of the objects to read and on the HW resources in the system. Appropriate could also mean 1 (no parallel execution), but, if your tables are quite big, “appropriate” will typically mean default.

Setting this option will also mean that the queries will not be downgraded, but they get queued until all the requested parallel servers are available. This allows complex queries that highly benefit from a parallel plan to execute using all the available resources, but also means that the execution times for the same statement can vary a lot between different runs.

Another interesting feature activated by this setting is the in-memory parallel execution. When using the MANUAL setting, data is read directly from disk and not from the buffer cache. That makes sense, because it does not generate buffer cache latch contention and also because typically parallelism kicks in when scanning big tables that wouldn’t fit in the buffer cache anyway.

However, nowadays server machines are packed with lots of RAM and seeing databases that fit entirely in the buffer cache is not so uncommon. The AUTO degree policy enables reading data blocks directly from the buffer cache when appropriate, which could turn into a great performance enhancement.

LIMITED

The third option was probably meant to be a mix of the first two, but turns out to be the “child of a lesser god”, with a poor, half-backed implementation. The idea behind is to limit the maximum degree of parallelism allowed for a query, using the PARALLEL_DEGREE_LIMIT initialization parameter, that can be set to a numeric value or left to the default (CPU). Moreover, both statement queuing and in-memory parallel execution are disabled, and the automatic DOP is used only when accessing tables explicitly decorated with the PARALLEL clause.

What is really confusing is the scope of the degree limit, which is described very poorly in the documentation.

The “automatic DOP” is a plan scoped attribute and it is turned on only when at least one of the tables accessed by the query is decorated explicitly with the PARALLEL clause. With “PARALLEL”, Oracle means PARALLEL (DEGREE DEFAULT) and not a fixed DOP. When all the tables in the query are decorated with a fixed degree, the automatic DOP is turned off and the limit gets totally ignored. Under these circumstances, each table is accessed using the DOP it is decorated with, which could be higher or lower than the degree limit.

When at least one of the tables in the query is decorated with a default degree, the automatic DOP is turned on and the degree of parallelism will be capped by the PARALLEL_DEGREE_LIMIT. According to Oracle, all tables decorated with an explicit DOP should use that degree of parallelism, but it is not so: when the automatic DOP kicks in, it is always limited by the degree limit, even when accessing a table with a higher explicit DOP.

Seeing is believing:

Let’s try to demonstrate this odd behaviour.

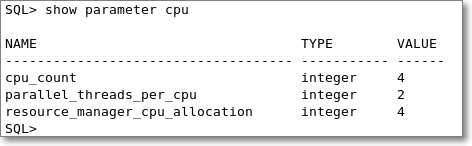

This is the CPU configuration for the instance I will use for the test:

It’s a virtual machine with 4 cores and the instance is configured to use 2 threads per CPU. With this setup, the default DOP is 8.

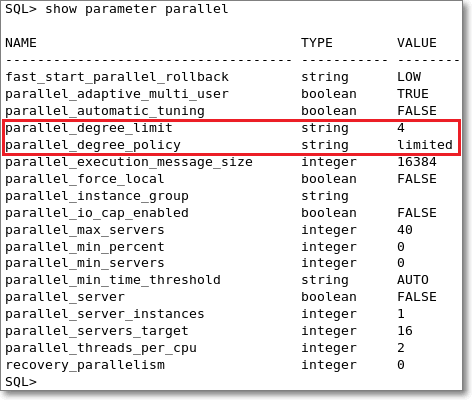

PARALLEL_DEGREE_POLICY is set to “LIMITED” and PARALLEL_DEGREE_LIMIT is set to 4. Both initialization parameters can be set at session scope.

Let’s create a test table holding 10 million rows of data:

CREATE TABLE TenMillionRows AS WITH TenRows AS ( SELECT 1 AS N FROM DUAL UNION ALL SELECT 2 FROM DUAL UNION ALL SELECT 3 FROM DUAL UNION ALL SELECT 4 FROM DUAL UNION ALL SELECT 5 FROM DUAL UNION ALL SELECT 6 FROM DUAL UNION ALL SELECT 7 FROM DUAL UNION ALL SELECT 8 FROM DUAL UNION ALL SELECT 9 FROM DUAL UNION ALL SELECT 10 FROM DUAL ) SELECT ROW_NUMBER() OVER(ORDER BY A.N) AS N, A.N AS A, B.N AS B, C.N AS C, D.N AS D, E.N AS E, F.N AS F, G.N AS G FROM TenRows A CROSS JOIN TenRows B CROSS JOIN TenRows C CROSS JOIN TenRows D CROSS JOIN TenRows E CROSS JOIN TenRows F CROSS JOIN TenRows G;

Also, let’s make sure that the table gets accessed using a parallel plan:

ALTER TABLE TenMillionRows PARALLEL(DEGREE DEFAULT);

To complete the test, we will also need a second test table:

CREATE TABLE HundredMillionRows AS WITH TenRows AS ( SELECT N FROM TenMillionRows WHERE N <= 10 ) SELECT A.* FROM TenMillionRows A CROSS JOIN TenRows B; ALTER TABLE HundredMillionRows PARALLEL(DEGREE DEFAULT);

Now that we have a couple of test tables, we can use them to see how they get accessed by queries.

As a first attempt, we set the limit to ‘CPU’, which means the default DOP (8 in this particular setup).

ALTER SESSION SET PARALLEL_DEGREE_POLICY = 'LIMITED';

ALTER SESSION SET PARALLEL_DEGREE_LIMIT = 'CPU';

SELECT COUNT(*)

FROM HundredMillionRows A

INNER JOIN TenMillionRows B

ON A.N = B.N;

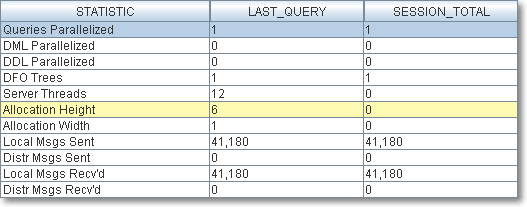

SELECT * FROM v$pq_sesstat;

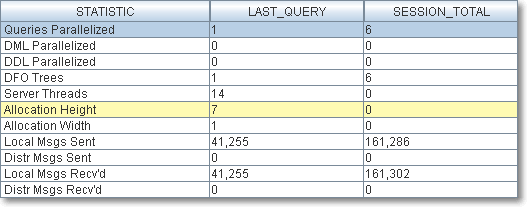

Oracle decided to access the tables using a DOP 7.

Now, if we limit the DOP with a lower PARALLEL_DEGREE_LIMIT, we should see the limit enforced:

ALTER SESSION SET PARALLEL_DEGREE_LIMIT = '4';

SELECT COUNT(*)

FROM HundredMillionRows A

INNER JOIN TenMillionRows B

ON A.N = B.N;

SELECT * FROM v$pq_sesstat;

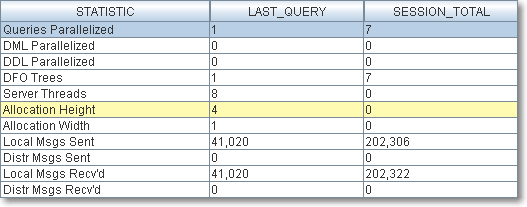

In fact, no surprises here: we get an allocation height of 4 due to the degree limit.

What would happen if we set a fixed degree of parallelism on the tables?

ALTER TABLE HundredMillionRows PARALLEL(DEGREE 6); ALTER TABLE TenMillionRows PARALLEL(DEGREE 3);

With both tables using a fixed DOP, according to the documentation, we should see the explicit DOP used:

SELECT COUNT(*)

FROM HundredMillionRows A

INNER JOIN TenMillionRows B

ON A.N = B.N;

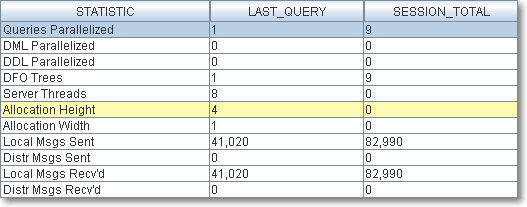

SELECT * FROM v$pq_sesstat;

Once again, no surprises: the degree limit gets ignored because both tables are decorated with an explicit fixed DOP.

However, the true reason behind this behaviour is not the explicit DOP on the tables, but the fact that no other table in the query uses a default DOP.

Let’s see what happens if we change the degree of parallelism for one of the tables:

ALTER TABLE TenMillionRows PARALLEL(DEGREE DEFAULT);

Now, joining HundredMillionRows with TenMillionRows will produce a different allocation height:

SELECT COUNT(*)

FROM HundredMillionRows A

INNER JOIN TenMillionRows B

ON A.N = B.N;

SELECT * FROM v$pq_sesstat;

The reason for this is that the degree limit is enforced only when the automatic DOP kicks in, which happens only when at least one table in the query is decorated with the PARALLEL(DEGREE DEFAULT) clause.

This is really annoying, because the DOP defined on a table does not determine the real DOP used to access that table, which instead is determined by the DOP defined on another table.

This makes the query optimizer behave in a totally unpredictable manner: you get manual DOP when all tables use a fixed parallel clause and you get limited DOP when at least one table uses the parallel default clause.

Workaround:

The only way to really limit the parallelism on all tables is to use the resource manager.

You set it up with:

exec dbms_resource_manager.clear_pending_area(); exec dbms_resource_manager.create_pending_area(); exec dbms_resource_manager.create_plan( plan =>; 'LIMIT_DOP', comment => 'Limit Degree of Parallelism'); exec dbms_resource_manager.create_plan_directive(plan=> 'LIMIT_DOP', group_or_subplan => 'OTHER_GROUPS' , comment => 'limits the parallelism', parallel_degree_limit_p1=> 4); exec dbms_resource_manager.validate_pending_area(); exec dbms_resource_manager.submit_pending_area();

You switch it on with:

alter system set resource_manager_plan = 'LIMIT_DOP' sid='*';

You switch it off with:

alter system reset resource_manager_plan sid='*'; alter system set resource_manager_plan = '' sid='*';

And drop it afterwards with:

exec dbms_resource_manager.clear_pending_area();

exec dbms_resource_manager.create_pending_area();

exec dbms_resource_manager.delete_plan_cascade('LIMIT_DOP')

exec dbms_resource_manager.validate_pending_area();

exec dbms_resource_manager.submit_pending_area();

What Oracle says:

I filed a bug with Oracle support a long time ago and their feedback is, as usual, disappointing.

https://support.oracle.com/CSP/main/article?cmd=show&type=BUG&id=11933336&productFamily=Oracle

I find this reply disappointing for at least three reasons:

- They decided to change the documentation instead of fixing the code

- The text they suggested to fix the documentation is wrong and even more misleading than before

- The documentation has not been changed yet

Conclusions:

Oracle provides three different policies for parallelism: AUTO, MANUAL and LIMITED.

AUTO and MANUAL have their upsides and downsides, but both reflect the behaviour described in the documentation.

LIMITED is a mix of AUTO and MANUAL, but behaves in a very strange and undocumented way and I suggest avoiding it, unless you reset all tables to PARALLEL(DEGREE DEFAULT).

An annoying bug in Database Mail Configuration Wizard

Looks like a sneaky bug made its way from SQL Server 2005 CTP to SQL Server 2008 R2 SP1 almost unnoticed, or, at least, ignored by Microsoft.

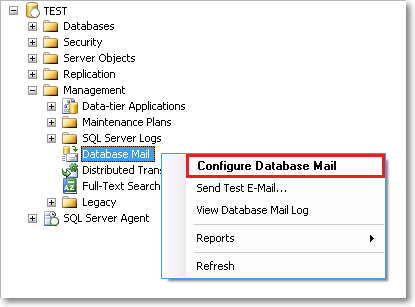

Imagine that you installed a new SQL Server instance (let’s call it “TEST”) and you want Database Mail configured in the same way as your other instances. No problem: you navigate the object explorer to Database Mail, start the wizard and then realize that you don’t remember the parameters to enter.

Not a big deal: you can copy those parameters from the server “PROD” that you configured last year.

You start the wizard on “PROD” and keep this window open to copy the parameter values in the “TEST” dialog.

OK, done? You just have to click “Finish” and… whoops!

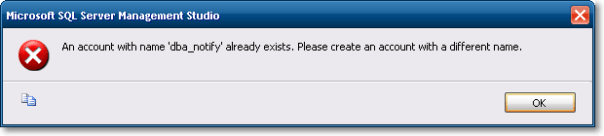

This is the error you get when you try to apply the settings:

Wait: you don’t have a “dba_notify” account on server “TEST” yet. This error message was generated on PROD instead.

Looks like MS developers coded this dialog assuming that just one of these was open at a time and probably used an application-scoped global variable to store the Database Mail settings. Not only: the Database Mail Wizard looses its database context and points to a different instance.

I found a Connect item reporting the issue, dating back to July 2005:

Here is another one from 2006:

I haven’t tried on Denali CTP3 yet, but I would not be surprised if I found it to be still broken.

Until Microsoft decides to fix it, if you want to copy the Database Mail Settings from another server, start the Database Mail Wizard from a separate SSMS instance, or your settings can get totally screwed up.

My stored procedure code template

Do you use code templates in SSMS? I am sure that at least once you happened to click “New stored procedure” in the object explorer context menu.

The default template for this action is a bit disappointing and the only valuable line is “SET NOCOUNT ON”. The rest of the code has to be heavily rewritten or deleted. Even if you use the handy keyboard shortcut for “Specify values for template parameters” (CTRL+SHIFT+M), you end up entering a lot of useless values. For instance, I find it very annoying having to enter stored procedure parameters definitions separately for name, type and default value.

Moreover, one of the questions I see asked over and over in the forums at SqlServerCentral is how to handle transactions and errors in a stored procedure, something that the default template does not.

Long story short, I’m not very happy with the built-in template, so I decided to code my own:

-- =============================================

-- Author: <Author,,Name>

-- Create date: <Create Date,,>

-- Description: <Description,,>

-- =============================================

CREATE PROCEDURE <ProcedureName, sysname, >

AS

BEGIN

SET NOCOUNT ON;

SET XACT_ABORT,

QUOTED_IDENTIFIER,

ANSI_NULLS,

ANSI_PADDING,

ANSI_WARNINGS,

ARITHABORT,

CONCAT_NULL_YIELDS_NULL ON;

SET NUMERIC_ROUNDABORT OFF;

DECLARE @localTran bit

IF @@TRANCOUNT = 0

BEGIN

SET @localTran = 1

BEGIN TRANSACTION LocalTran

END

BEGIN TRY

--Insert code here

IF @localTran = 1 AND XACT_STATE() = 1

COMMIT TRAN LocalTran

END TRY

BEGIN CATCH

DECLARE @ErrorMessage NVARCHAR(4000)

DECLARE @ErrorSeverity INT

DECLARE @ErrorState INT

SELECT @ErrorMessage = ERROR_MESSAGE(),

@ErrorSeverity = ERROR_SEVERITY(),

@ErrorState = ERROR_STATE()

IF @localTran = 1 AND XACT_STATE() <> 0

ROLLBACK TRAN

RAISERROR ( @ErrorMessage, @ErrorSeverity, @ErrorState)

END CATCH

END

This template can be saved in the default path and overwrite the kludgy “New Stored Procedure” built-in template.

Some things to keep in mind:

- I don’t use nested transactions (they’re totally pointless IMHO) and I check for an existing transaction instead.

- The stored procedure will commit/rollback the transaction only if it was started inside the procedure.

- I want every stored procedure to throw the errors it catches. If there’s another calling procedure, it will take care of the errors in the same way.

- This is your computer: you can safely replace <Author, ,Name> with your real name.

- It would really be nice if there was some kind of way to make SSMS fill <Create Date, ,> with the current date. Unfortunately there’s no way. If you are using CVS or some other kind of version control system, this is a nice place for an RCS string such as $Date$

- If you like templates parameters and you heard bad news regarding this feature in the next version of SQL Server (codename Denali), don’t worry: MS fixed it.

Backup all user databases with TDPSQL

Stanislav Kamaletdin (twitter) today asked on #sqlhelp how to backup all user databases with TDP for SQL Server:

My first thought was to use the “*” wildcard, but this actually means all databases, not just user databases.

I ended up adapting a small batch file I’ve been using for a long time to take backups of all user databases with full recovery model:

@ECHO OFF

SQLCMD -E -Q "SET NOCOUNT ON; SELECT name FROM sys.databases WHERE name NOT IN ('master','model','msdb','tempdb')" -h -1 -o tdpsql_input.txt

FOR /F %%A IN (tdpsql_input.txt) DO CALL :perform %%A

GOTO end_batch

:perform

tdpsqlc backup %1 full /configfile=tdpsql.cfg /tsmoptfile=dsm.opt /sqlserver=servername /logfile=tdpsqlc.log

:end_batch

Most of the “trick” is in the SQLCMD line:

- -Q “query” executes the query and returns. I added “SET NOCOUNT ON;” to eliminate the row count from the output.

- -h -1 suppresses the column headers

- -o tdpsql_input.txt redirects the output to a text file

FOR /F %%A IN (tdpsql_input.txt) DO CALL :perform %%A