Blog Archives

Should I check tempdb for corruption?

You all know that checking our databases for corruption regularly is a must. But what about tempdb? Do you need to check it as well?

The maintenance plans that come with SQL Server do not run DBCC CHECKDB on tempdb, which is a strong indicator that it’s a special database and something different is happening behind the scenes. If you think that relying on the behavior of a poor tool such as maintenance plans to make assumptions on internals is a bit far-fetched, well, I see your point. However, you can get more clues by running DBCC CHECKDB against your tempdb and seeing what it outputs:

DBCC CHECKDB will not check SQL Server catalog or Service Broker consistency because a database snapshot could not be created or because WITH TABLOCK was specified.

See? Something special is happening here: CHECKDB cannot take a database snapshot to run the consistency checks and has to run as if WITH TABLOCK was specified.

Big deal? It depends. Running CHECKDB WITH TABLOCK means that you revert to the SQL2000 behavior, when table locks were taken to perform the consistency checks. This means that you might impact your workload with blocking. Not good.

Well, tempdb is recreated each time you start the instance and doesn’t contain any data worth worrying about, so you don’t need to check if it got corrupt, right? Again, it depends: tempdb might get so corrupted that it could start to throw errors at the sessions using it. It might also get corrupted to a point that triggers a stop of the instance. Not good.

So, if you check tempdb, you get blocking and if you don’t, you can get errors. What’s a reasonable balance?

Don’t check tempdb on the same schedule you use for checking user databases: find a reasonable maintenance window when any blocking issue is acceptable. Probably once a week is enough.
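As a minimal sketch of what such a scheduled check could run (the options are my assumption, not a prescription), the command itself is a single statement. NO_INFOMSGS only suppresses informational output and ALL_ERRORMSGS reports every error found:

```sql
-- Run this inside the maintenance window chosen for tempdb:
-- CHECKDB cannot use a database snapshot here, so it takes locks
-- and may block sessions that are using tempdb.
DBCC CHECKDB (tempdb) WITH NO_INFOMSGS, ALL_ERRORMSGS;
```

If the command returns no rows and no errors, no corruption was detected by the checks that can run on tempdb.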

What should you do if you find corruption in tempdb? Don’t restart the instance right away: it could still survive for some time before problems arise. The whole point of checking tempdb is avoiding unplanned downtime, so if you cycle the instance as soon as you find corruption, you’re killing the only reason to check it. Plan downtime with your users and cycle the instance when it causes the least harm to your business.

Moreover, if something corrupted your tempdb, it has likely corrupted your user databases, so make sure you check everything before shutting down the instance (time to take tail-log backups?).

If your tempdb gets corrupted, restarting the instance won’t be enough to make corruption go away: you will have to stop SQL Server, delete the files manually and then start SQL Server to let it create the new tempdb files.
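Before stopping the instance, it helps to write down where the tempdb files live. A small query along these lines, run while the instance is still up, lists them:

```sql
-- List the physical files that back tempdb, so you know
-- exactly what to delete once SQL Server is stopped.
SELECT name, type_desc, physical_name
FROM sys.master_files
WHERE database_id = DB_ID(N'tempdb');
```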

Even if you don’t mind losing the data stored in tempdb, getting corruption is a sign that something went wrong: make sure you investigate the issue and find the root cause. It could be a problem with the I/O subsystem or a malfunctioning RAM module or something else: fix the root cause before it affects the user databases.
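One place to start the investigation, offered here as a suggestion rather than a complete procedure, is the msdb.dbo.suspect_pages table, where SQL Server records pages that failed with I/O or checksum errors across all databases:

```sql
-- Pages recorded as suspect by the engine.
-- event_type 1, 2 and 3 indicate errors (823/824 errors, bad checksums, torn pages);
-- higher values mark pages that were later restored, repaired or deallocated.
SELECT DB_NAME(database_id) AS database_name,
       file_id,
       page_id,
       event_type,
       error_count,
       last_update_date
FROM msdb.dbo.suspect_pages
ORDER BY last_update_date DESC;
```

Rows for databases other than tempdb are a hint that the problem is already affecting user data.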

Call to action:

Are you checking your tempdb for corruption? If you are not, don’t panic: choose an appropriate schedule and start checking it.

dba_runCHECKDB v2(012)

If you are one of the many who downloaded my consistency check stored procedure called “dba_runCHECKDB”, you may have noticed a “small” glitch… it doesn’t work on SQL Server 2012!

This is due to the resultset definition of DBCC CHECKDB, which has changed again in SQL Server 2012. Trying to pipe the results of that command into the table definition for SQL Server 2008 produces a column mismatch and it obviously fails.

Fixing the code is very easy indeed, but I could never find the time to post the corrected version until today.

Also, I had to discover the new table definition for DBCC CHECKDB, and it was not as easy as it used to be in SQL Server 2008. In fact, a couple of days ago I posted a way to discover the new resultset definition working around the cumbersome metadata discovery feature introduced in SQL Server 2012.

Basically, the new output of DBCC CHECKDB now includes 5 new columns:

CREATE TABLE ##DBCC_OUTPUT(

Error int NULL,

[Level] int NULL,

State int NULL,

MessageText nvarchar(2048) NULL,

RepairLevel nvarchar(22) NULL,

Status int NULL,

DbId int NULL, -- was smallint in SQL2005

DbFragId int NULL, -- new in SQL2012

ObjectId int NULL,

IndexId int NULL,

PartitionId bigint NULL,

AllocUnitId bigint NULL,

RidDbId smallint NULL, -- new in SQL2012

RidPruId smallint NULL, -- new in SQL2012

[File] smallint NULL,

Page int NULL,

Slot int NULL,

RefDbId smallint NULL, -- new in SQL2012

RefPruId smallint NULL, -- new in SQL2012

RefFile smallint NULL,

RefPage int NULL,

RefSlot int NULL,

Allocation smallint NULL

)

If you Google the name of one of these new columns, you will probably find a lot of blog posts (no official documentation, unfortunately) that describe the new output of DBCC CHECKDB, but none of them is strictly correct: all of them indicate the smallint columns as int.

Not a big deal, actually, but still incorrect.
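To show how the table is meant to be used, here is a minimal sketch that assumes a SQL Server 2012 instance and the ##DBCC_OUTPUT table created above. The column count has to match the output of the version you run it on, so treat this as an illustration and not as the procedure's actual code:

```sql
-- Pipe the output of CHECKDB into the table defined above.
-- 'model' is just a small database that exists on every instance.
INSERT INTO ##DBCC_OUTPUT
EXEC('DBCC CHECKDB(''model'') WITH TABLERESULTS, ALL_ERRORMSGS');

-- Error 8989 is the summary row that reports allocation and consistency errors.
SELECT Error, [Level], MessageText
FROM ##DBCC_OUTPUT
WHERE Error = 8989;
```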

I will refrain from posting the whole procedure here: I updated the code in the original post, and you can also download it from the Code Repository.

As usual, suggestions and comments are welcome.

Discovering resultset definition of DBCC commands in SQL Server 2012

Back in 2011 I showed a method to discover the resultset definition of undocumented DBCC commands.

At the time, SQL Server 2012 had not been released yet and nothing suggested that the linked server trick could stop working on the new major version. Surprisingly enough it did.

If you try to run the same code shown in that old post on a 2012 instance, you will get a quite explicit error message:

DECLARE @srv nvarchar(4000);

SET @srv = @@SERVERNAME; -- gather this server name

-- Create the linked server

EXEC master.dbo.sp_addlinkedserver

@server = N'LOOPBACK',

@srvproduct = N'SQLServ', -- it’s not a typo: it can’t be “SQLServer”

@provider = N'SQLNCLI', -- change to SQLOLEDB for SQLServer 2000

@datasrc = @srv;

-- Set the authentication to "current security context"

EXEC master.dbo.sp_addlinkedsrvlogin

@rmtsrvname = N'LOOPBACK',

@useself = N'True',

@locallogin = NULL,

@rmtuser = NULL,

@rmtpassword = NULL;

USE tempdb;

GO

CREATE PROCEDURE loginfo

AS

BEGIN

SET NOCOUNT ON;

DBCC LOGINFO();

END

GO

SELECT *

INTO tempdb.dbo.loginfo_output

FROM OPENQUERY(LOOPBACK, 'SET FMTONLY OFF; EXEC tempdb.dbo.loginfo');

DROP PROCEDURE loginfo;

GO

Msg 11528, Level 16, State 1, Procedure sp_describe_first_result_set, Line 1 The metadata could not be determined because statement 'DBCC LOGINFO();' in procedure 'loginfo' does not support metadata discovery.

This behaviour has to do with the way SQL Server 2012 tries to discover metadata at parse/bind time, when the resultset is not available yet for DBCC commands.
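You can observe the same limitation without a linked server. As a small sketch, the sys.dm_exec_describe_first_result_set function introduced in SQL Server 2012 reports the discovery failure in its error columns instead of raising it (the exact error values returned for a DBCC batch are not something I am asserting here):

```sql
-- Ask SQL Server 2012 to describe the resultset of a DBCC command.
-- When discovery is not possible, the error_* columns explain why.
SELECT name,
       system_type_name,
       error_number,
       error_type_desc,
       error_message
FROM sys.dm_exec_describe_first_result_set(N'DBCC LOGINFO();', NULL, 0);
```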

Fortunately, there is still a way to discover metadata when you have a SQL Server instance of a previous version available.

On my laptop I have a 2008R2 instance I can use to query the 2012 instance with a linked server:

-- run this on the 2008R2 instance

USE [master]

GO

EXEC master.dbo.sp_addlinkedserver

@server = N'LOCALHOST\SQL2012'

,@srvproduct = N'SQL Server'

EXEC master.dbo.sp_addlinkedsrvlogin

@rmtsrvname = N'LOCALHOST\SQL2012'

,@useself = N'True'

,@locallogin = NULL

,@rmtuser = NULL

,@rmtpassword = NULL

GO

SELECT *

INTO tempdb.dbo.loginfo_output

FROM OPENQUERY([LOCALHOST\SQL2012], 'SET FMTONLY OFF; EXEC tempdb.dbo.loginfo');

GO

This code pipes the results of the DBCC command into a table in the tempdb database in my 2008R2 instance. The table can now be scripted using SSMS:

Using the 2008R2 instance as a “Trojan Horse” for the metadata discovery, you can see that the resultset definition of DBCC LOGINFO() has changed again in SQL Server 2012:

CREATE TABLE [dbo].[loginfo_output](

[RecoveryUnitId] [int] NULL, -- new in SQL2012

[FileId] [int] NULL,

[FileSize] [bigint] NULL,

[StartOffset] [bigint] NULL,

[FSeqNo] [int] NULL,

[Status] [int] NULL,

[Parity] [tinyint] NULL,

[CreateLSN] [numeric](25, 0) NULL

)
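Once the definition is known, it can be used directly on the 2012 instance. The following sketch assumes the SQL Server 2012 column list shown above and uses a hypothetical temporary table name, #loginfo, to count the virtual log files of the current database:

```sql
-- Temporary table matching the SQL Server 2012 output of DBCC LOGINFO
CREATE TABLE #loginfo (
    RecoveryUnitId int NULL,
    FileId         int NULL,
    FileSize       bigint NULL,
    StartOffset    bigint NULL,
    FSeqNo         int NULL,
    Status         int NULL,
    Parity         tinyint NULL,
    CreateLSN      numeric(25, 0) NULL
);

-- INSERT ... EXEC does not rely on metadata discovery, so it works on 2012
INSERT INTO #loginfo
EXEC('DBCC LOGINFO()');

-- One row per virtual log file
SELECT COUNT(*) AS vlf_count
FROM #loginfo;

DROP TABLE #loginfo;
```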

This trick will be particularly useful for an upcoming (and long overdue) post, so… stay tuned!

Setting up an e-mail alert for DBCC CHECKDB errors

Some months ago I posted a script on a SQLServerCentral forum to help a member automate the execution of DBCC CHECKDB and send an e-mail alert in case a consistency error is found.

The original thread can be found here.

I noticed that many people are actually using that script and I also got some useful feedback on the code itself, so I decided to write this post to make an enhanced version available to everyone.

The Problem

Your primary responsibility as a DBA is to safeguard your data with backups. I mean intact backups! Keep in mind that when you back up a corrupt database, you will also restore a corrupt database.

A task that checks the database integrity should be part of your backup strategy and you should be notified immediately when corruption is found.

Unfortunately, the built-in consistency check Maintenance Task does not provide an alerting feature and you have to code it yourself.

The Solution

SQL Server 2000 and above accept the “WITH TABLERESULTS” option for most DBCC commands to output the messages as a result set. Those results can be saved to a table and processed to identify messages generated by corrupt data and raise an alert.

If you don’t know how to discover the resultset definition of DBCC CHECKDB WITH TABLERESULTS, I suggest that you take a look at this post.

Here is the complete code of the stored procedure I am using on my production databases:

-- https://spaghettidba.com/2011/11/28/email-alert-dbcc-checkdb/

-- You have a TOOLS database, don't you?

-- If not, create it: you'll thank me later.

USE TOOLS;

GO

IF NOT EXISTS( SELECT 1 FROM sys.schemas WHERE name = 'maint')

EXEC('CREATE SCHEMA maint');

GO

-- =============================================

-- Author: Gianluca Sartori - spaghettidba

-- Create date: 2011-06-30

-- Description: Runs DBCC CHECKDB on the database(s) specified

-- and returns a table result that can be used in

-- reporting and alerting.

-- =============================================

CREATE PROCEDURE [maint].[dba_runCHECKDB]

@dbName nvarchar(max) = NULL, -- Database name. If NULL, will check all databases

@PHYSICAL_ONLY bit = 0, -- Set to 1 to perform physical check only. Defaults to 0.

@allMessages bit = 0, -- Set to 1 to return all the messages generated by DBCC

-- Set to 0 to return one summary message for each database (default)

@dbmail_profile sysname = NULL, -- DBMail profile to use when sending the results

@dbmail_recipient sysname = NULL, -- DBMail recipient

@log_to_table bit = 0, -- Set to 1 to enable logging to table DBCC_CHECKDB_HISTORY

@help bit = 0

AS

BEGIN

SET NOCOUNT,

XACT_ABORT,

QUOTED_IDENTIFIER,

ANSI_NULLS,

ANSI_PADDING,

ANSI_WARNINGS,

ARITHABORT,

CONCAT_NULL_YIELDS_NULL ON;

SET NUMERIC_ROUNDABORT OFF;

IF @help = 1

BEGIN

SELECT *

FROM (

SELECT '@dbname nvarchar(max) = NULL','Database name.'

UNION ALL SELECT '','''Database'' checks a single database'

UNION ALL SELECT '','NULL checks all databases'

UNION ALL SELECT '','''Database1,Database2,...DatabaseN'' checks the databases in the list'

UNION ALL SELECT '','''^Database1,^Database2,...^DatabaseN'' checks all databases except the ones in the list'

UNION ALL SELECT '','Combinations of positive (not prefixed with ^) and negative (prefixed with ^) elements'

UNION ALL SELECT '','considers positive elements only. Use negative elements alone.'

UNION ALL SELECT '',''

UNION ALL SELECT '@PHYSICAL_ONLY bit = 0','If set to 1, performs CHECKDB WITH PHYSICAL_ONLY'

UNION ALL SELECT '',''

UNION ALL SELECT '@allMessages bit = 0','If set to 1, returns all the messages generated by DBCC'

UNION ALL SELECT '','If set to 0, returns a summary message for each database'

UNION ALL SELECT '',''

UNION ALL SELECT '@dbmail_profile sysname = NULL','The dbmail profile to use to send out the email.'

UNION ALL SELECT '','NULL uses the global dbmail profile'

UNION ALL SELECT '',''

UNION ALL SELECT '@dbmail_recipient sysname = NULL','The address that will receive the email.'

UNION ALL SELECT '','If set to NULL disables sending the email and returns a result set.'

UNION ALL SELECT '','If not NULL sends the email without returning a result set'

UNION ALL SELECT '',''

UNION ALL SELECT '@log_to_table bit = 0','If set to 1, stores the results in the table DBCC_CHECKDB_HISTORY.'

UNION ALL SELECT '','If the table does not exist, it will create the table for you.'

UNION ALL SELECT '','When logging to a table, a result set is not returned.'

UNION ALL SELECT '',''

UNION ALL SELECT '@help bit = 0','If set to 1 displays this help.'

) AS h (

[-----parameter------------------------------------------------------]

,[-----description----------------------------------------------------------------------------------------------------------------]

)

RETURN

END

DECLARE @version int

DECLARE @sql nvarchar(4000)

DECLARE @ErrorMessage nvarchar(2048)

DECLARE @body nvarchar(max)

DECLARE @Message nvarchar(4000)

DECLARE @Severity int

DECLARE @State int

-- determine major version: DBCC output can be different

SELECT @version = CAST(REPLACE(CAST(SERVERPROPERTY('ProductVersion') AS char(2)),'.','') AS int)

-- Clean up the @dbname parameter

-- Remove leading/trailing spaces

SET @dbname = LTRIM(RTRIM(@dbname))

-- Remove leading commas

WHILE LEFT(@dbname,1) = ','

SET @dbname = SUBSTRING(@dbname, 2, LEN(@dbname))

-- Remove trailing commas

WHILE RIGHT(@dbname,1) = ','

SET @dbname = SUBSTRING(@dbname, 1, LEN(@dbname)-1)

IF OBJECT_ID('tempdb..##DBCC_OUTPUT') IS NOT NULL

DROP TABLE ##DBCC_OUTPUT

-- SQL2005, SQL2008, SQL2008R2 produce this output

-- except for the columns explicitly marked as new in SQL 2012

CREATE TABLE ##DBCC_OUTPUT(

Error int NULL,

[Level] int NULL,

State int NULL,

MessageText nvarchar(2048) NULL,

RepairLevel nvarchar(22) NULL,

Status int NULL,

DbId int NULL, -- was smallint in SQL2005

DbFragId int NULL, -- new in SQL2012

ObjectId int NULL,

IndexId int NULL,

PartitionId bigint NULL,

AllocUnitId bigint NULL,

RidDbId smallint NULL, -- new in SQL2012

RidPruId smallint NULL, -- new in SQL2012

[File] smallint NULL,

Page int NULL,

Slot int NULL,

RefDbId smallint NULL, -- new in SQL2012

RefPruId smallint NULL, -- new in SQL2012

RefFile smallint NULL,

RefPage int NULL,

RefSlot int NULL,

Allocation smallint NULL

)

-- Add a computed column

ALTER TABLE ##DBCC_OUTPUT ADD Outcome AS

CASE

WHEN Error = 8989 AND MessageText LIKE '%0 allocation errors and 0 consistency errors%' THEN 0

WHEN Error <> 8989 THEN NULL

ELSE 1

END

-- Add an identity column to sort results when sending the email

ALTER TABLE ##DBCC_OUTPUT ADD RowId int IDENTITY(1,1)

DECLARE @localTran bit

IF @@TRANCOUNT = 0

BEGIN

SET @localTran = 1

BEGIN TRANSACTION LocalTran

END

BEGIN TRY

-- Create the history table if needed

IF @log_to_table = 1

AND OBJECT_ID('maint.DBCC_CHECKDB_HISTORY') IS NULL

BEGIN

SELECT TOP(0)

RowId,

Error,

[Level],

State,

MessageText,

RepairLevel,

Status,

DbId,

DbFragId,

CAST(NULL AS sysname) AS DatabaseName,

ObjectId,

IndexId,

PartitionId,

AllocUnitId,

RidDbId,

RidPruId,

[File],

Page,

Slot,

RefDbId,

RefPruId,

RefFile,

RefPage,

RefSlot,

Allocation,

Outcome,

GETDATE() AS RunDate

INTO maint.DBCC_CHECKDB_HISTORY

FROM ##DBCC_OUTPUT

ALTER TABLE maint.DBCC_CHECKDB_HISTORY

ADD CONSTRAINT PK_DBCC_CHECKDB_HISTORY PRIMARY KEY CLUSTERED(RowId)

END

-- Open a cursor on the matching databases (version dependent)

DECLARE c_databases CURSOR LOCAL FAST_FORWARD

FOR

SELECT QUOTENAME(name) AS name

FROM master.sys.databases

WHERE (

@dbname IS NULL

OR ',' + @dbname + ',' NOT LIKE '%,[^!^]%' ESCAPE '!'

OR ',' + @dbname + ',' LIKE '%,' + name + ',%'

OR ',' + @dbname + ',' LIKE '%,' + REPLACE(QUOTENAME(name),'[','[[]') + ',%'

)

AND ',' + ISNULL(@dbname,'') + ',' NOT LIKE '%,!^' + name + ',%' ESCAPE '!'

AND ',' + ISNULL(@dbname,'') + ',' NOT LIKE '%,!^' + REPLACE(QUOTENAME(name),'[','[[]') + ',%' ESCAPE '!'

AND state_desc = 'ONLINE'

AND is_read_only = 0

AND source_database_id IS NULL -- Exclude Snapshots

AND name <> 'tempdb'

OPEN c_databases

FETCH NEXT FROM c_databases INTO @dbName

WHILE @@FETCH_STATUS = 0

BEGIN

-- Build a SQL string

SET @sql = 'DBCC CHECKDB('+ @dbName +') WITH TABLERESULTS, ALL_ERRORMSGS '

IF @PHYSICAL_ONLY = 1

SET @sql = @sql + ', PHYSICAL_ONLY '

BEGIN TRY

IF @version > 8 AND @version < 11

BEGIN

-- SQL2005/2008 use this column list

INSERT INTO ##DBCC_OUTPUT (

Error,

[Level],

State,

MessageText,

RepairLevel,

Status,

DbId,

ObjectId,

IndexId,

PartitionId,

AllocUnitId,

[File],

Page,

Slot,

RefFile,

RefPage,

RefSlot,

Allocation

)

EXEC(@sql)

END

IF @version >= 11

BEGIN

-- SQL2012 uses all columns

INSERT INTO ##DBCC_OUTPUT (

Error,

[Level],

State,

MessageText,

RepairLevel,

Status,

DbId,

DbFragId,

ObjectId,

IndexId,

PartitionId,

AllocUnitId,

RidDbId,

RidPruId,

[File],

Page,

Slot,

RefDbId,

RefPruId,

RefFile,

RefPage,

RefSlot,

Allocation

)

EXEC(@sql)

END

END TRY

BEGIN CATCH

SELECT @ErrorMessage = 'Unable to run DBCC on database ' + @dbName + ': ' + ERROR_MESSAGE()

INSERT INTO ##DBCC_OUTPUT (Error, MessageText)

SELECT Error = 8989,

MessageText = @ErrorMessage

END CATCH

FETCH NEXT FROM c_databases INTO @dbName

END

CLOSE c_databases

DEALLOCATE c_databases

IF NOT EXISTS (

SELECT 1 FROM ##DBCC_OUTPUT

)

BEGIN

RAISERROR('No database matches the name specified.',10,1)

END

IF @log_to_table = 1

BEGIN

INSERT INTO maint.DBCC_CHECKDB_HISTORY (

Error,

[Level],

State,

MessageText,

RepairLevel,

Status,

DbId,

DbFragId,

DatabaseName,

ObjectId,

IndexId,

PartitionId,

AllocUnitId,

RidDbId,

RidPruId,

[File],

Page,

Slot,

RefDbId,

RefPruId,

RefFile,

RefPage,

RefSlot,

Allocation,

Outcome,

RunDate

)

SELECT

Error,

[Level],

State,

MessageText,

RepairLevel,

Status,

DbId,

DbFragId,

DatabaseName = ISNULL(DB_NAME(DbId),'resourcedb'),

ObjectId,

IndexId,

PartitionId,

AllocUnitId,

RidDbId,

RidPruId,

[File],

Page,

Slot,

RefDbId,

RefPruId,

RefFile,

RefPage,

RefSlot,

Allocation,

Outcome,

RunDate = GETDATE()

FROM ##DBCC_OUTPUT

WHERE Error = 8989

OR @allMessages = 1

OR DbId IN (

SELECT DbId

FROM ##DBCC_OUTPUT

WHERE Error = 8989

AND Outcome = 1

)

END

-- Build the final SQL statement

SET @sql =

'SELECT ISNULL(DB_NAME(DbId),''resourcedb'') AS DatabaseName, ' +

CASE @allMessages

WHEN 1 THEN '*'

ELSE 'MessageText, Outcome'

END + '

FROM ##DBCC_OUTPUT

WHERE 1 = 1 ' +

CASE @allMessages WHEN 1 THEN '' ELSE 'AND Error = 8989' END

IF @dbmail_recipient IS NULL

BEGIN

-- Query DBCC output directly

EXEC(@sql)

END

ELSE

BEGIN

-- Pipe DBCC output to a variable

SET @sql = '

SELECT @body = (

SELECT ISNULL(MessageText,'''') + char(10) AS [text()]

FROM ( ' + @sql + ' AND Error = 8989 ) AS src

WHERE Outcome = 1

ORDER BY 1 DESC

FOR XML PATH('''')

)'

EXEC sp_executesql @sql, N'@body nvarchar(max) OUTPUT', @body OUTPUT

-- Send CHECKDB report

IF @body IS NOT NULL

BEGIN

EXEC msdb.dbo.sp_send_dbmail

@profile_name = @dbmail_profile,

@recipients = @dbmail_recipient,

@subject = 'Consistency error found!',

@body = @body,

@importance = 'High',

@query = '

SET NOCOUNT ON;

SELECT TOP(5000) ISNULL(DB_NAME(DbId),''resourcedb'') + '' -'' AS DatabaseName, MessageText

FROM ##DBCC_OUTPUT WITH (NOLOCK)

WHERE DbId IN (

SELECT DbId

FROM ##DBCC_OUTPUT WITH (NOLOCK)

WHERE Error = 8989

AND Outcome = 1

)

ORDER BY RowId ASC ',

@attach_query_result_as_file = 1,

@query_result_no_padding = 1,

@query_result_header = 0,

@exclude_query_output = 1,

@query_attachment_filename = 'DBCC_CHECKDB_Errors.log'

END

END

IF @localTran = 1 AND XACT_STATE() = 1

COMMIT TRAN LocalTran

IF OBJECT_ID('tempdb..##DBCC_OUTPUT') IS NOT NULL

DROP TABLE ##DBCC_OUTPUT

END TRY

BEGIN CATCH

SELECT @Message = ERROR_MESSAGE(),

@Severity = ERROR_SEVERITY(),

@State = ERROR_STATE()

IF @localTran = 1 AND XACT_STATE() <> 0

ROLLBACK TRAN

RAISERROR ( @Message, @Severity, @State)

END CATCH

END

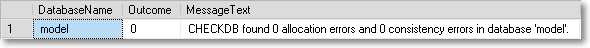

Once the stored procedure is ready, you can run it against the desired databases:

EXEC [maint].[dba_runCHECKDB] @dbName = 'model', @PHYSICAL_ONLY = 0, @allmessages = 0

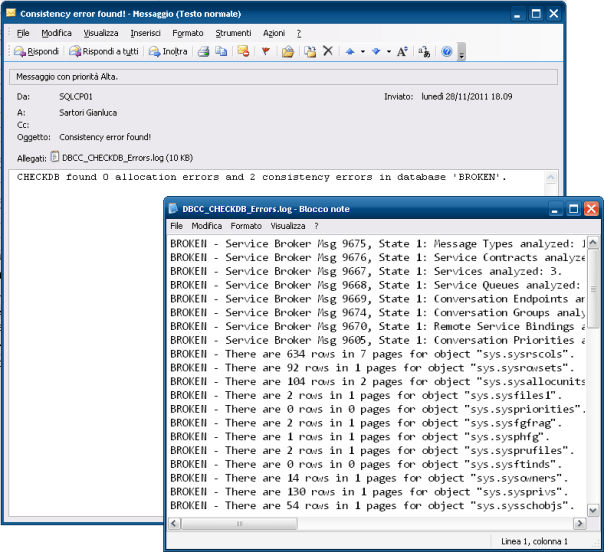
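The @dbName parameter also accepts lists and exclusions, as described in the procedure's built-in help. A few illustrative calls (the database names are only examples):

```sql
-- Check an explicit list of databases
EXEC [maint].[dba_runCHECKDB] @dbName = 'master,msdb';

-- Check every database except the ones prefixed with ^, physical checks only
EXEC [maint].[dba_runCHECKDB] @dbName = '^model,^msdb', @PHYSICAL_ONLY = 1;

-- Display the parameter help
EXEC [maint].[dba_runCHECKDB] @help = 1;
```

As the help text warns, mixing positive and negative elements in the same list considers the positive elements only.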

Setting up an e-mail alert

In order to receive an e-mail alert, you can use a SQL Agent job and schedule this script to run every night, or whenever you find appropriate.

EXEC [maint].[dba_runCHECKDB]

@dbName = NULL,

@PHYSICAL_ONLY = 0,

@allmessages = 0,

@dbmail_profile = 'DBA_profile',

@dbmail_recipient = 'dba@mycompany.com'
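If you prefer scripting the job instead of creating it in SSMS, a sketch like the following could work. The job name, the schedule name and the 01:00 start time are placeholders of mine, and the procedure is assumed to live in the TOOLS database:

```sql
USE msdb;
GO

-- Create the job (the name is a placeholder)
EXEC dbo.sp_add_job
    @job_name = N'DBA - CHECKDB with e-mail alert';

-- Single T-SQL step that calls the procedure in the TOOLS database
EXEC dbo.sp_add_jobstep
    @job_name      = N'DBA - CHECKDB with e-mail alert',
    @step_name     = N'Run dba_runCHECKDB',
    @subsystem     = N'TSQL',
    @database_name = N'TOOLS',
    @command       = N'EXEC maint.dba_runCHECKDB
        @dbName = NULL,
        @PHYSICAL_ONLY = 0,
        @allMessages = 0,
        @dbmail_profile = ''DBA_profile'',
        @dbmail_recipient = ''dba@mycompany.com'';';

-- Run every day (freq_type 4 = daily, every 1 day) at 01:00:00
EXEC dbo.sp_add_jobschedule
    @job_name          = N'DBA - CHECKDB with e-mail alert',
    @name              = N'Nightly at 01:00',
    @freq_type         = 4,
    @freq_interval     = 1,
    @active_start_time = 010000;

-- Target the local server
EXEC dbo.sp_add_jobserver
    @job_name    = N'DBA - CHECKDB with e-mail alert',
    @server_name = N'(local)';
GO
```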

The e-mail message generated by the stored procedure contains the summary outcome and a detailed log, attached as a text file:

Logging to a table

If needed, you can save the output of this procedure to a history table that logs the outcome of DBCC CHECKDB over time:

-- Run the stored procedure with @log_to_table = 1

EXEC TOOLS.maint.dba_runCHECKDB

@dbName = NULL,

@PHYSICAL_ONLY = 0,

@allMessages = 0,

@log_to_table = 1

-- Query the latest results

SELECT *

FROM (

SELECT *, RN = ROW_NUMBER() OVER (PARTITION BY DBId ORDER BY RunDate DESC)

FROM TOOLS.maint.DBCC_CHECKDB_HISTORY

WHERE Outcome IS NOT NULL

) AS dbcc_history

WHERE RN = 1

When invoked with the @log_to_table parameter for the first time, the procedure creates a log table that will be used to store the results. Subsequent executions will append to the table.
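The history table also makes it easy to spot databases that are falling behind. As a rough sketch, assuming the table lives in TOOLS.maint and picking an arbitrary 7-day threshold, this query lists the databases with no clean check logged recently:

```sql
-- Databases without a clean CHECKDB (Outcome = 0) logged in the last 7 days.
-- tempdb is skipped because the procedure does not check it.
SELECT d.name AS database_name,
       MAX(h.RunDate) AS last_clean_check
FROM master.sys.databases AS d
LEFT JOIN TOOLS.maint.DBCC_CHECKDB_HISTORY AS h
       ON h.DbId = d.database_id
      AND h.Outcome = 0
WHERE d.name <> 'tempdb'
GROUP BY d.name
HAVING MAX(h.RunDate) IS NULL
    OR MAX(h.RunDate) < DATEADD(DAY, -7, GETDATE())
ORDER BY d.name;
```

A NULL in last_clean_check means no clean check has ever been logged for that database.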

No excuses!

The web is full of blogs, articles and forums on how to automate DBCC CHECKDB. If your data has any value to you, CHECKDB must be part of your maintenance strategy.

Run! Check the last time you performed a successful CHECKDB on your databases NOW! Was it last year? You may be in big trouble.
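If you have never logged your checks anywhere, one commonly used way to find the last clean CHECKDB is the undocumented DBCC DBINFO command. The sketch below is only an illustration: the command is unsupported, the column types of the hypothetical #dbinfo table are my assumption, and 'model' is just an example database:

```sql
-- Capture the output of the undocumented DBCC DBINFO command
CREATE TABLE #dbinfo (
    ParentObject nvarchar(255) NULL,
    [Object]     nvarchar(255) NULL,
    Field        nvarchar(255) NULL,
    Value        nvarchar(255) NULL
);

INSERT INTO #dbinfo
EXEC('DBCC DBINFO(''model'') WITH TABLERESULTS');

-- dbi_dbccLastKnownGood holds the date of the last CHECKDB that completed without errors.
-- DISTINCT is used because some versions return the field more than once.
SELECT DISTINCT Value AS last_known_good_checkdb
FROM #dbinfo
WHERE Field = 'dbi_dbccLastKnownGood';

DROP TABLE #dbinfo;
```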