Blog Archives

Please Throw this Hardware at the Problem

We’re being told over and over that “throwing hardware at the problem” is not the correct solution for performance problems and that a 2x faster server will not make our application twice as fast. Quite true, but there’s one thing we can do very easily, without emptying the piggy bank, that won’t hurt for sure: buying more RAM.

The price of server-class RAM has dropped so dramatically that today you can buy a 16 GB module for around € 200. Depending on the architecture of your server, you could max out the memory supported by Standard Edition (128 GB) with eight such modules, for just € 1,600.

Isn’t it a reasonable price for better performance?

Let’s put it in perspective: you decide that throwing hardware at the problem is not right, so you hire a consultant to tune performance. How many days of his work can you buy with € 1,600? Two? One? How much tuning work will he do in one or two days? Do you want him to work on the core issues or fight with memory pressure? RAM is cheap, consultants are not: make them work on what really matters for you.

Does adding RAM always make sense? No: if your server has 4 GB of data and 16 GB of RAM, there’s no need to add more, and if you do you won’t see any improvement. On the other hand, if you have 100 GB of data and 32 GB of RAM, you should be upgrading right away. Isn’t your business worth € 1,600? You’re probably paying much more for SQL Server licensing, so why waste your money on a license that has to run on an underpowered machine? RAM is cheap, SQL Server licenses are not: let your license work on a good machine.
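A quick way to see where a server stands is to compare the size of the data files with the physical memory installed. The following is a minimal sketch, assuming SQL Server 2008 or later and permission to view server state. It reports the allocated file size, which overestimates the actual data when the files contain a lot of free space.

```sql
-- Total allocated size of the data files on the instance, in GB.
-- sys.master_files reports size in 8 KB pages.
SELECT CAST(SUM(CAST(size AS bigint)) * 8 / 1048576.0 AS decimal(10, 1)) AS data_size_gb
FROM sys.master_files
WHERE type_desc = 'ROWS';

-- Physical memory visible to the operating system, in GB.
SELECT CAST(total_physical_memory_kb / 1048576.0 AS decimal(10, 1)) AS physical_memory_gb
FROM sys.dm_os_sys_memory;
```

If the first number is several times the second, the server is a likely candidate for a memory upgrade.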

Will it make a huge difference? It depends: if the server is already under heavy memory pressure, you will see a big improvement. Even if memory pressure is not the worst issue on your server, you will see a big difference for those queries that access data not used frequently: if you don’t have enough RAM, that data will probably live outside the buffer cache and will have to be read from disk. RAM is cheap, faster disks are incredibly expensive: let the RAM work and the disks sit idle.
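To get a rough idea of how much data currently lives in the buffer cache, and how quickly pages are being evicted, something like the following can help. It is only a sketch: it assumes permission to view server state, the first query can take a while on servers with a lot of memory, and there is no universal threshold for a “good” page life expectancy.

```sql
-- Buffer cache usage per database, in MB (each cached page is 8 KB).
SELECT DB_NAME(database_id) AS database_name,
       COUNT_BIG(*) * 8 / 1024 AS cached_mb
FROM sys.dm_os_buffer_descriptors
GROUP BY database_id
ORDER BY cached_mb DESC;

-- Page life expectancy: how long, in seconds, a page is expected
-- to stay in the buffer cache. Persistently low values suggest
-- that data is being pushed out of memory and re-read from disk.
SELECT object_name,
       cntr_value AS page_life_expectancy_seconds
FROM sys.dm_os_performance_counters
WHERE counter_name = 'Page life expectancy'
  AND object_name LIKE '%Buffer Manager%';
```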

That said, what I see in the wild is lots and lots of production servers with 8 GB of RAM or less. Many of those servers were installed a few years ago, when RAM was pricier than today, but there’s no reason not to upgrade them now. Upgrading the CPU has an impact on SQL Server licensing, while adding more RAM doesn’t require additional licensing fees. See? RAM is cheap.

On the other hand, we have many servers running on virtual machines at hosting/cloud facilities, where you pay for machine specs. Something has to change here as well: hosting companies are offering too little RAM in their machines and the configurations with more memory are crazily expensive. Dear hosting/cloud companies, open your eyes: RAM is ridiculously cheap!

Call to action:

Did I mention that RAM is cheap? Check your servers: are they running on less than € 200 of RAM? It’s time to upgrade NOW!

How to Eat a SQL Elephant in 10 Bites

Sometimes, when you have to optimize a poorly performing query, you may find yourself staring at a huge statement, wondering where to start.

Some developers think that a single elephant statement is better than multiple small statements, but this is not always the case.

Let’s try to look from the perspective of software quality:

- Efficiency

The optimizer will likely come up with a suboptimal plan, giving up early on optimizations and transformations.

- Reliability

Any slight change in statistics could lead the optimizer to produce a different and less efficient plan.

- Maintainability

A single huge statement is less readable and maintainable than multiple small statements.

With those points in mind, the only sensible thing to do is cut the elephant into smaller pieces and eat them one at a time.

This is how I do it:

- Lay out the original code and read the statement carefully

- Decide whether a full rewrite is more convenient

- Set up a test environment

- Identify the query parts

  - Identify the main tables

  - Identify non-correlated subqueries and UNIONs

  - Identify correlated subqueries

- Write a query outline

- Break the statement into parts with CTEs, views, functions and temporary tables

- Merge redundant subqueries

- Put it all together

- Verify the output based on multiple different input values

- Comment your work thoroughly

1. Lay out the original code and read the statement carefully

Use one of the many SQL formatters you can find online. My favorite one is Tao Klerks’ Poor Man’s T-SQL Formatter: it’s very easy to use and configure and it comes with a handy SSMS add-in and plugins for Notepad++ and WinMerge. Moreover, it’s free and open source. A must-have.

Once your code is readable, don’t rush to the keyboard: take your time and read it carefully.

- Do you understand (more or less) what it is supposed to do?

- Do you think you could have coded it yourself?

- Do you know all the T-SQL constructs it contains?

If you answered “yes” to all the above, you’re ready to go to the next step.

2. Decide whether a full rewrite is more convenient

OK, that code sucks and you have to do something. It’s time to make a decision:

- Take the business rules behind the statement and rewrite it from scratch

When the statement is too complicated and unreadable, it might be less time-consuming to throw the old statement away and write your own version.

Usually it is quite easy when you know exactly what the code is supposed to do. Just make sure you’re not making wrong assumptions and be prepared to compare your query with the original one many times.

- Refactor the statement

When the business rules are unclear (or unknown), starting from scratch is not an option. No, don’t laugh! The business logic may have been buried in the sands of time, or you may simply be working on a query without any will to understand the business processes behind it.

Bring a big knife: you’re going to cut the elephant in pieces.

- Leave the statement unchanged

Sometimes the statement is too big or too complicated to bother taking the time to rewrite it. For instance, this query would take months to rewrite manually.

It works? Great: leave it alone.

3. Set up a test environment

It doesn’t matter how you decide to do it: at the end of the day you will have to compare the results of your rewritten query with the results of the “elephant” and make sure you did not introduce errors in your code.

The best way to do this is to prepare a script that compares the results of the original query with the results of your rewritten version. This is the script I am using (you will find it in the code repository, as usual).

    -- =============================================
    -- Author:      Gianluca Sartori - spaghettidba
    -- Create date: 2012-03-14
    -- Description: Runs two T-SQL statements and
    --              compares the results
    -- =============================================

    -- Drop temporary tables
    IF OBJECT_ID('tempdb..#original') IS NOT NULL
        DROP TABLE #original;

    IF OBJECT_ID('tempdb..#rewritten') IS NOT NULL
        DROP TABLE #rewritten;

    -- Store the results of the original
    -- query into a temporary table
    WITH original AS (
        <original, text, >
    )
    SELECT *
    INTO #original
    FROM original;

    -- Add a sort column
    ALTER TABLE #original ADD [______sortcolumn] int identity(1,1);

    -- Store the results of the rewritten
    -- query into a temporary table
    WITH rewritten AS (
        <rewritten, text, >
    )
    SELECT *
    INTO #rewritten
    FROM rewritten;

    -- Add a sort column
    ALTER TABLE #rewritten ADD [______sortcolumn] int identity(1,1);

    -- Compare the results
    SELECT 'original' AS source, *
    FROM (
        SELECT *
        FROM #original
        EXCEPT
        SELECT *
        FROM #rewritten
    ) AS A
    UNION ALL
    SELECT 'rewritten' AS source, *
    FROM (
        SELECT *
        FROM #rewritten
        EXCEPT
        SELECT *
        FROM #original
    ) AS B;

The script is an SSMS query template that takes the results of the original and the rewritten query and compares the result sets, returning all the missing or different rows. The script wraps the two queries in CTEs: this means that the ORDER BY clause (if any) will have to be moved outside the CTE.

Also, the results of the two queries are piped to temporary tables, which means that you can’t have duplicate column names in the result set.
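To make those two restrictions concrete, here is a sketch of how one half of the template could be filled in. The query is only an illustration against the AdventureWorks sample database and the aliases HeaderOrderID and DetailOrderID are made up for the example: the ORDER BY sits on the outer SELECT rather than inside the CTE, and the two SalesOrderID columns get distinct names so that SELECT ... INTO can create the temporary table.

```sql
IF OBJECT_ID('tempdb..#original') IS NOT NULL
    DROP TABLE #original;

WITH original AS (
    -- No ORDER BY in here: it is not allowed inside a CTE
    -- (unless TOP is also specified).
    SELECT H.SalesOrderID AS HeaderOrderID,   -- alias avoids a duplicate column name
           D.SalesOrderID AS DetailOrderID,
           D.ProductID
    FROM Sales.SalesOrderHeader AS H
    INNER JOIN Sales.SalesOrderDetail AS D
        ON H.SalesOrderID = D.SalesOrderID
)
SELECT *
INTO #original
FROM original
ORDER BY HeaderOrderID, DetailOrderID, ProductID;  -- ORDER BY moved outside the CTE
```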

Another thing worth noting is that the statements to compare cannot be stored procedures. One simple way to overcome this limitation is to use the technique I described in this post.

The queries inside the CTEs should then be rewritten as:

SELECT * FROM OPENQUERY(LOOPBACK,'<original, text,>')

Obviously, all the quotes must be doubled, which is the reason why I didn’t set up the script this way in the first place. It’s annoying, but it’s the only way I know of to pipe the output of a stored procedure into a temporary table without knowing the resultset definition in advance. If you can do better, suggestions are always welcome.
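As an illustration of the quote doubling, this is roughly what the original CTE could look like for a stored procedure call. It is a sketch under several assumptions: a linked server named LOOPBACK that points back to the local instance already exists, and MyDatabase.dbo.GetOrdersByStatus is a hypothetical procedure with a single string parameter. Depending on the procedure and the SQL Server version, OPENQUERY may also be unable to determine the shape of the result set.

```sql
IF OBJECT_ID('tempdb..#original') IS NOT NULL
    DROP TABLE #original;

WITH original AS (
    SELECT *
    FROM OPENQUERY(
        LOOPBACK,
        -- The whole statement is a string literal, so every quote
        -- inside it is doubled: 'Shipped' becomes ''Shipped''.
        'EXEC MyDatabase.dbo.GetOrdersByStatus @Status = ''Shipped'''
    )
)
SELECT *
INTO #original
FROM original;
```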

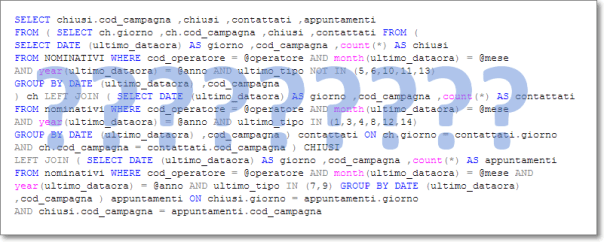

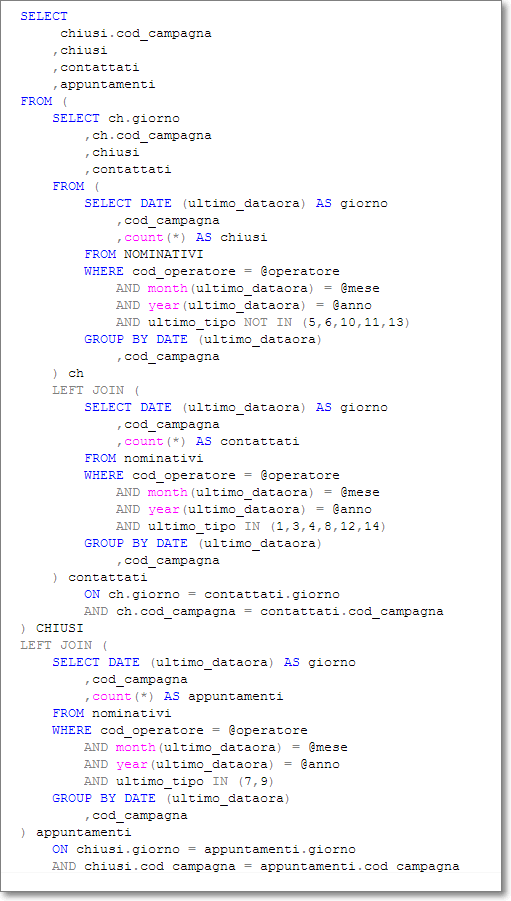

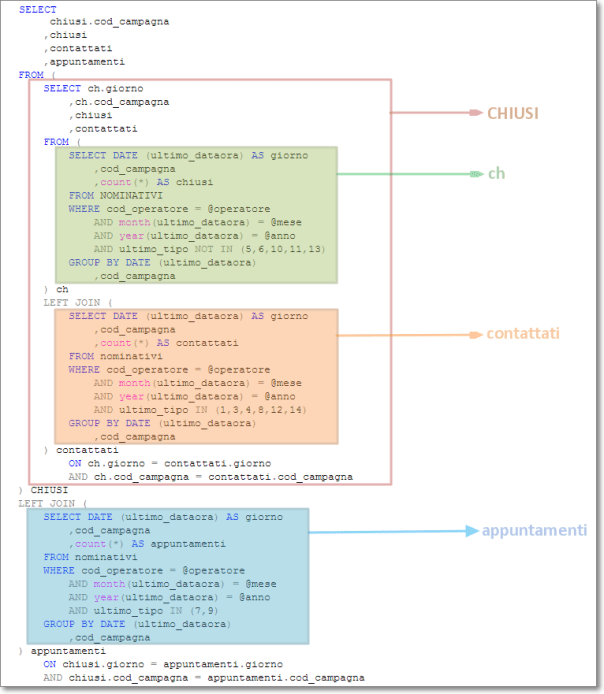

4. Identify the query parts

OK, now you have everything ready and you can start eating the elephant. The first thing to do is to identify all the autonomous blocks in the query and give them a name. You can do this at any granularity and repeat the task as many times as you like: the important thing is that at the end of this process you have a list of query parts and a name for each part.

Identify the main tables

Usually I like the idea that the data comes from one “main” table and all the rest comes from correlated tables. For instance, if I have to return a resultset containing some columns from the “SalesOrderHeader” table and some columns from the “SalesOrderDetail” table, I consider SalesOrderHeader the main table and SalesOrderDetail a correlated table. It fits well with my mindset, but you are free to see things the way you prefer.

Probably these tables are already identified by an alias: note down the aliases and move on.

Identify non-correlated subqueries and UNIONs

Non-correlated subqueries can be treated as inline views. Often these subqueries are joined to the main tables to enrich the resultset with additional columns.

Don’t be scared away by huge subqueries: you can always repeat all the steps for any single subquery and rewrite it to be more compact and readable.

Again, just note down the aliases and move to the next step.

Identify correlated subqueries

Correlated subqueries are not different from non-correlated subqueries, with the exception that you will have less freedom to move them from their current position in the query. However, that difference doesn’t matter for the moment: give them a name and note it down.

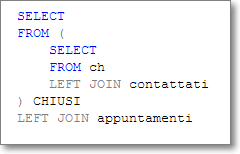

5. Write a query outline

Use the names you identified in the previous step and write a query outline. It won’t execute, but it gives you the big picture.
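An outline could look something like the following. All the part names here (OrderHeader, CustomerTotals, LastShipDate) are hypothetical and merely stand for whatever you noted down in the previous step; the outline is written as comments because it is not meant to execute.

```sql
-- Query outline (not executable): one line per named part.
--
-- SELECT  <columns from OrderHeader>       -- main table
--        ,<columns from CustomerTotals>    -- non-correlated subquery
--        ,<LastShipDate>                   -- correlated subquery
-- FROM    OrderHeader
-- JOIN    CustomerTotals ON <customer key>
-- WHERE   <date range filter>
-- UNION ALL
-- SELECT  <same columns from ArchivedOrders>
```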

If you really want the big picture, print the query. It may seem crazy, but sometimes I find it useful to be able to see the query as a whole, with all the parts with their names highlighted in different colors.

Yes, that’s a single SELECT statement, printed in Courier New 8 pt. on 9 letter sheets, hanging on the wall in my office.

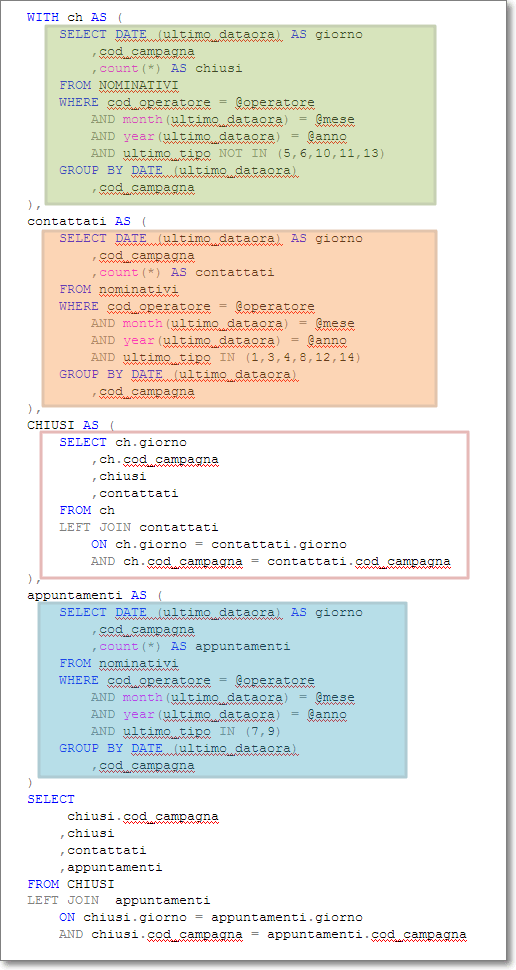

6. Break the statement into parts with CTEs, views, functions and temporary tables

SQL Server offers a fair number of tools that allow breaking a single statement into parts:

- Common Table Expressions

- Subqueries

- Views

- Inline Table Valued Functions

- Multi-Statement Table Valued Functions

- Stored procedures

- Temporary Tables

- Table Variables

Ideally, you will choose the one that performs best in your scenario, but you could also take usability and modularity into account.

CTEs and subqueries are a good choice when the statement they contain is not used elsewhere and there is no need to reuse that code.

Table-valued functions and views, on the contrary, are most suitable when there is an actual need to encapsulate the code in modules to be reused in multiple places.
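For example, a piece of logic that several queries need could be wrapped in an inline table-valued function. The sketch below uses the AdventureWorks sample tables; the function name dbo.ProductPriceStats is made up for the illustration, and GO is the batch separator used by SSMS (CREATE FUNCTION has to be the first statement in its batch).

```sql
CREATE FUNCTION dbo.ProductPriceStats (@ProductID int)
RETURNS TABLE
AS
RETURN (
    -- One row per call: price statistics for the given product
    SELECT AVG(UnitPrice) AS AveragePrice,
           MIN(UnitPrice) AS MinimumPrice,
           MAX(UnitPrice) AS MaximumPrice
    FROM Sales.SalesOrderDetail
    WHERE ProductID = @ProductID
);
GO

-- Any query can now reuse the function through APPLY
SELECT PR.ProductID, PR.Name, S.AveragePrice, S.MinimumPrice, S.MaximumPrice
FROM Production.Product AS PR
CROSS APPLY dbo.ProductPriceStats(PR.ProductID) AS S;
```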

Generally speaking, you will use temporary tables or table variables when the subquery gets used more than once in the statement, so that its rows are computed once rather than at every reference.
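A minimal sketch of the idea, again on the AdventureWorks sample tables (the #ProductPrices name is arbitrary): the aggregate is computed once, stored in a temporary table and then referenced twice by the final query.

```sql
IF OBJECT_ID('tempdb..#ProductPrices') IS NOT NULL
    DROP TABLE #ProductPrices;

-- Average selling price per product, computed only once
SELECT ProductID,
       AVG(UnitPrice) AS AveragePrice
INTO #ProductPrices
FROM Sales.SalesOrderDetail
GROUP BY ProductID;

-- Products that sell above the overall average:
-- #ProductPrices is read twice, but SalesOrderDetail is not scanned again
SELECT PR.ProductID, PR.Name, PP.AveragePrice
FROM Production.Product AS PR
INNER JOIN #ProductPrices AS PP
    ON PP.ProductID = PR.ProductID
WHERE PP.AveragePrice > (SELECT AVG(AveragePrice) FROM #ProductPrices);
```

Whether this beats a CTE referenced twice depends on the data volumes and the plan you get, so it is worth testing both.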

Though I would really like to go into deeper details on the performance pros and cons of each construct, that would take an insane amount of time and space. You can find a number of articles and blogs on those topics and I will refrain from echoing them here.

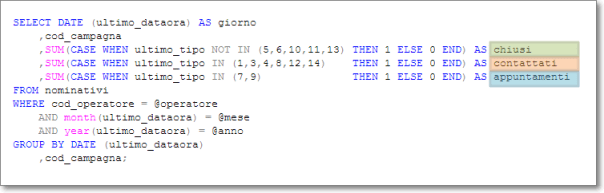

7. Merge redundant subqueries

Some parts of your query may be redundant and you may have the opportunity to merge those parts. The merged query will be more compact and will likely perform significantly better.

For instance, you could have multiple subqueries that perform aggregate calculations on the same row set:

    SELECT ProductID
        ,Name
        ,AverageSellOutPrice = (
            SELECT AVG(UnitPrice)
            FROM Sales.SalesOrderDetail
            WHERE ProductID = PR.ProductID
        )
        ,MinimumSellOutPrice = (
            SELECT MIN(UnitPrice)
            FROM Sales.SalesOrderDetail
            WHERE ProductID = PR.ProductID
        )
        ,MaximumSellOutPrice = (
            SELECT MAX(UnitPrice)
            FROM Sales.SalesOrderDetail
            WHERE ProductID = PR.ProductID
        )
    FROM Production.Product AS PR;

The above query can be rewritten easily to avoid hitting the SalesOrderDetail table multiple times:

    SELECT ProductID
        ,Name
        ,AverageSellOutPrice
        ,MinimumSellOutPrice
        ,MaximumSellOutPrice
    FROM Production.Product AS PR
    CROSS APPLY (
        SELECT AVG(UnitPrice), MIN(UnitPrice), MAX(UnitPrice)
        FROM Sales.SalesOrderDetail
        WHERE ProductID = PR.ProductID
    ) AS SellOutPrices (AverageSellOutPrice, MinimumSellOutPrice, MaximumSellOutPrice);

Another typical situation where you can merge some parts is when multiple subqueries perform counts on slightly different row sets:

    SELECT ProductID
        ,Name
        ,OnlineOrders = (
            SELECT COUNT(*)
            FROM Sales.SalesOrderHeader AS SOH
            WHERE SOH.OnlineOrderFlag = 1
                AND EXISTS (
                    SELECT *
                    FROM Sales.SalesOrderDetail
                    WHERE SalesOrderID = SOH.SalesOrderID
                        AND ProductID = PR.ProductID
                )
        )
        ,OfflineOrders = (
            SELECT COUNT(*)
            FROM Sales.SalesOrderHeader AS SOH
            WHERE SOH.OnlineOrderFlag = 0
                AND EXISTS (
                    SELECT *
                    FROM Sales.SalesOrderDetail
                    WHERE SalesOrderID = SOH.SalesOrderID
                        AND ProductID = PR.ProductID
                )
        )
    FROM Production.Product AS PR;

The only difference between the two subqueries is the predicate on SOH.OnlineOrderFlag. The two queries can be merged by introducing a CASE expression in the aggregate:

    SELECT ProductID
        ,Name
        ,ISNULL(OnlineOrders,0) AS OnlineOrders
        ,ISNULL(OfflineOrders,0) AS OfflineOrders
    FROM Production.Product AS PR
    CROSS APPLY (
        SELECT SUM(CASE WHEN SOH.OnlineOrderFlag = 1 THEN 1 ELSE 0 END),
            SUM(CASE WHEN SOH.OnlineOrderFlag = 0 THEN 1 ELSE 0 END)
        FROM Sales.SalesOrderHeader AS SOH
        WHERE EXISTS (
            SELECT *
            FROM Sales.SalesOrderDetail
            WHERE SalesOrderID = SOH.SalesOrderID
                AND ProductID = PR.ProductID
        )
    ) AS Orderscount (OnlineOrders, OfflineOrders);

There are infinite possibilities and enumerating them all would be far beyond the scope of this post. This is one of the topics that my students often find hard to understand and I realize that it really takes some experience to identify merge opportunities and implement them.

8. Put it all together

Remember the query outline you wrote previously? It’s time to put it into action.

Some of the identifiers may have gone away in the merge process, some others are still there and have been transformed into different SQL constructs, such as CTEs, iTVFs or temporary tables.

9. Verify the output based on multiple different input values

Now it’s time to see if your new query works exactly like the original one. You already have a script for that: you can go on and use it.

Remember that the test can be considered meaningful only if you repeat it a reasonably large number of times, with different parameters. Some queries could appear to be identical, but still be semantically different. Make sure the rewritten version handles NULLs and out-of-range parameters in the same way.
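A classic case of two queries that look equivalent but are not is NOT IN versus NOT EXISTS when NULLs are involved. The following self-contained sketch uses made-up table variables and values (it needs SQL Server 2008 or later for the multi-row VALUES syntax):

```sql
DECLARE @Products TABLE (ProductID int, Color varchar(10) NULL);
INSERT INTO @Products VALUES (1, 'Red'), (2, 'Blue'), (3, NULL);

DECLARE @Excluded TABLE (Color varchar(10) NULL);
INSERT INTO @Excluded VALUES ('Red'), (NULL);

-- NOT IN: returns no rows at all, because every comparison
-- against the NULL in @Excluded evaluates to UNKNOWN
SELECT ProductID
FROM @Products
WHERE Color NOT IN (SELECT Color FROM @Excluded);

-- NOT EXISTS: returns products 2 and 3, because a NULL
-- never matches in the equality predicate
SELECT P.ProductID
FROM @Products AS P
WHERE NOT EXISTS (
    SELECT *
    FROM @Excluded AS E
    WHERE E.Color = P.Color
);
```

If the test data happens to contain no NULLs, the two versions return the same rows and the comparison script will not show any difference, which is why the choice of test inputs matters.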

10. Comment your work thoroughly

If you don’t comment your work, somebody will find it even more difficult to maintain than the elephant you found when you started.

Comments are free and don’t affect query performance in any way. Don’t add comments that merely mimic what the query does; instead, write a meaningful description of the output of the query.

For instance, given a code fragment like this:

    SELECT H.SalesOrderID, H.OrderDate, D.ProductID
    INTO #orders
    FROM Sales.SalesOrderHeader AS H
    INNER JOIN Sales.SalesOrderDetail AS D
        ON H.SalesOrderID = D.SalesOrderID
    WHERE H.OrderDate BETWEEN @StartDate AND @EndDate

a comment like “joins OrderHeader to OrderDetail” adds nothing to the clarity of the code. A comment like “Selects the orders placed between the @StartDate and @EndDate and saves the results in a temporary table for later use” would be a much better choice.

Elephant eaten. (Burp!)

After all, it was not too big, was it?