Blog Archives

A viable alternative to dynamic SQL in administration scripts

As a DBA, you probably have your toolbox full of scripts, procedures and functions that you use for the day-to-day administration of your instances.

I’m no exception and my hard drive is full of scripts that I tend to accumulate and never throw away, even if I know I will never need (or find?) them again.

However, my preferred way to organize and maintain my administration scripts is a database called “TOOLS”, which contains all the scripts I regularly use.

One of the challenges involved in keeping the scripts in a database rather than in a script file is the inability to choose a database context for the execution. When a statement is encapsulated in a view, function or stored procedure in a database, every reference to a database-specific object is limited to the database that contains the programmable object itself. The only way to overcome this limitation is the use of dynamic SQL.

For instance, if I want to query the name of the tables in a database, I can use the following statement:

SELECT name FROM sys.tables

The statement references a catalog view specific to a single database, so if I enclose it in a stored procedure, the table names returned by this query are those found in the database that contains the stored procedure itself:

USE TOOLS;
GO
CREATE PROCEDURE getTableNames
AS
SELECT name FROM sys.tables;
GO
EXEC getTableNames;

This is usually not an issue, since most stored procedures will not cross the boundaries of the database they are created in. Administration scripts are different, because they are meant as a single entry point to maintain the whole SQL Server instance.

In order to let the statement work against a different database, you need to choose one of the following solutions:

- dynamic SQL

- sp_executesql

- marking as a system object

- … an alternative way

Each of these techniques has its PROs and its CONs and I will try to describe them in this post.

1. Dynamic SQL

It’s probably the easiest way to solve the issue: you just have to concatenate the database name to the object names.

USE TOOLS;

GO

ALTER PROCEDURE getTableNames @db_name sysname

AS

BEGIN

DECLARE @sql nvarchar(max)

SET @sql = 'SELECT name FROM '+ QUOTENAME(@db_name) +'.sys.tables';

EXEC(@sql)

END

GO

EXEC getTableNames 'msdb';

PROS:

- very easy to implement for simple statements

CONS:

- can rapidly turn to a nightmare with big, complicated statements, as each object must be concatenated with the database name. Different objects have different ways to be related to the database: tables and views can be concatenated directly, while functions such as OBJECT_NAME accept an additional parameter to specify the database name.

- the statement has to be treated as a string and enclosed in quotes, which means that:

- quotes must be escaped, and escaped quotes must be escaped again and escaped and re-escaped quotes… ok, you know what I mean

- no development aids such as intellisense, just-in-time syntax checks and syntax coloring
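To make the first point concrete, here is a minimal sketch of a statement that needs the database name in two different forms: as a prefix for the catalog view and as a database id for OBJECT_NAME. The target database (msdb) and the variable names are only assumptions for the example.

```sql
-- Sketch only: msdb is an arbitrary target database chosen for the example.
DECLARE @db_name sysname = N'msdb';
DECLARE @sql nvarchar(max);

-- The catalog view takes the database name as a prefix,
-- while OBJECT_NAME takes a database id as its second argument.
SET @sql = N'SELECT OBJECT_NAME(object_id, DB_ID('
         + QUOTENAME(@db_name, '''')   -- quoted as a string literal
         + N')) AS table_name FROM '
         + QUOTENAME(@db_name)         -- quoted as an identifier
         + N'.sys.tables;';

PRINT @sql;   -- inspect the generated statement before running it
EXEC (@sql);
```

Even in this tiny example the database name has to be quoted in two different ways, which is exactly the kind of detail that gets lost in longer statements.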

2. sp_executesql

It’s a neater way to avoid concatenating the database name to each object referenced in the statement.

USE TOOLS;

GO

ALTER PROCEDURE getTableNames @db_name sysname

AS

BEGIN

-- use a @sql variable to store the whole query

-- without concatenating the database name

DECLARE @sql nvarchar(max);

SET @sql = 'SELECT name FROM sys.tables';

-- concatenate the database name to the

-- sp_executesql call, just once

DECLARE @cmd nvarchar(max);

SET @cmd = 'EXEC '+ QUOTENAME(@db_name) +'.sys.sp_executesql @sql';

EXEC sp_executesql @cmd, N'@sql nvarchar(max)', @sql

END

GO

EXEC getTableNames 'msdb';

PROS:

- the dynamic sql is taken as a whole and does not need to be cluttered with multiple concatenations

CONS:

- needs some more work than a straight concatenation and can be seen as “obscure”

- suffers from the same issues found with plain dynamic sql, because the statement is, again, treated as a string
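As a variation on the same idea (not the code used in the procedure above), EXEC also accepts the name of the procedure in a variable, so the database-qualified sp_executesql can be called directly and parameters can be passed through as usual. The sketch below assumes msdb as the target database; the @pattern parameter is made up for the example.

```sql
-- Sketch only: msdb and the @pattern parameter are assumptions for the example.
DECLARE @db_name sysname = N'msdb';

-- Build the three-part name of sp_executesql in the target database
DECLARE @proc nvarchar(400) = QUOTENAME(@db_name) + N'.sys.sp_executesql';

DECLARE @sql nvarchar(max) =
    N'SELECT DB_NAME() AS database_name, name
      FROM sys.tables
      WHERE name LIKE @pattern;';

-- The statement runs in the context of @db_name,
-- and @pattern is passed as a regular parameter.
EXEC @proc @sql, N'@pattern nvarchar(128)', @pattern = N'sys%';
```

The statement is still a string, so the CONS listed above apply here as well.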

3. System object

Nice and easy: every stored procedure you create in the master database with the “sp_” prefix can be executed from any database context.

Using the undocumented stored procedure sp_MS_marksystemobject you can also mark the stored procedure as a “system object” and let it reference the tables in the database from which it is invoked.

USE master;

GO

CREATE PROCEDURE sp_getTableNames

AS

BEGIN

SELECT name FROM sys.tables

END

GO

EXEC sys.sp_MS_marksystemobject 'sp_getTableNames'

GO

USE msdb;

GO

EXEC sp_getTableNames;

PROS:

- no need to use dynamic sql

CONS:

- requires creating objects in the “master” database, which is something I tend to avoid

- works with stored procedures only (actually, it works with other objects, such as tables and views, but you have to use the “sp_” prefix. The day I find a view named “sp_getTableNames” in the master database, it won’t be safe to stay near me)
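If you want to check whether the procedure has actually been marked, a quick look at the catalog should be enough. This is a sketch based on the assumption that sp_MS_marksystemobject flips the is_ms_shipped flag; the procedure is undocumented, so take it with a grain of salt.

```sql
-- Sketch only: assumes the procedure from the example above exists in master.
-- After sp_MS_marksystemobject, is_ms_shipped is expected to be 1.
SELECT name, type_desc, is_ms_shipped
FROM master.sys.objects
WHERE name = N'sp_getTableNames';
```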

An alternative method:

It would be really helpful if we could store the statement we want to execute inside an object that doesn’t involve dynamic sql and doesn’t need to be stored in the master database. In other words, we need a way to get the best of both worlds.

Is there such a solution? Apparently, there isn’t.

The ideal object to store a statement and reuse it later is a view, but there is no way to “execute” a view against a different database. In fact you don’t execute a view, you just select from it, which is quite a different thing.

What you “execute” when you select from a view is the statement in its definition (not really, but let me simplify).

So, what we would need to do is just read the definition from a view and use the statement against the target database. Sounds straightforward, but it’s not.

The definition of a view also contains the “CREATE VIEW” statement and stripping it off is not as easy as it seems.

Let’s see the issue with an example: I will create a view to query the last update date of the index statistics in a database, using the query from Glenn Berry’s Diagnostic Queries.

USE TOOLS;

GO

-- When were Statistics last updated on all indexes? (Query 48)

CREATE VIEW statisticsLastUpdate

AS

SELECT

DB_NAME() AS database_name

,o.NAME AS stat_name

,i.NAME AS [Index Name]

,STATS_DATE(i.[object_id], i.index_id) AS [Statistics Date]

,s.auto_created

,s.no_recompute

,s.user_created

,st.row_count

FROM sys.objects AS o WITH (NOLOCK)

INNER JOIN sys.indexes AS i WITH (NOLOCK)

ON o.[object_id] = i.[object_id]

INNER JOIN sys.stats AS s WITH (NOLOCK)

ON i.[object_id] = s.[object_id]

AND i.index_id = s.stats_id

INNER JOIN sys.dm_db_partition_stats AS st WITH (NOLOCK)

ON o.[object_id] = st.[object_id]

AND i.[index_id] = st.[index_id]

WHERE o.[type] = 'U';

I just had to remove ORDER BY and OPTION(RECOMPILE), because neither a bare ORDER BY clause nor query hints are allowed in a view definition.

Querying the object definition returns the whole definition of the view, not only the SELECT statement:

SELECT OBJECT_DEFINITION(OBJECT_ID('statisticsLastUpdate')) AS definition

definition

-------------------------------------------------------------------

-- When were Statistics last updated on all indexes? (Query 48)

CREATE VIEW statisticsLastUpdate

AS

SELECT

DB_NAME() AS database_name

,o.NAME AS stat_name

,i.NAME AS [Index Name]

,STATS_DATE(i.[object_id], i.index_id) AS [Statistics Date]

,s.auto_created

,s.no_recompute

(1 row(s) affected)

In order to extract the SELECT statement, we would need something able to parse (properly!) the view definition and we all know how complex it can be.

Fortunately, SQL Server ships with an undocumented function used in replication that can help solve the problem: its name is fn_replgetparsedddlcmd.

This function accepts some parameters, lightly documented in the code: fn_replgetparsedddlcmd (@ddlcmd, @FirstToken, @objectType, @dbname, @owner, @objname, @targetobject)

Going back to the example, we can use this function to extract the SELECT statement from the view definition:

SELECT master.sys.fn_replgetparsedddlcmd(

OBJECT_DEFINITION(OBJECT_ID('statisticsLastUpdate'))

,'CREATE'

,'VIEW'

,DB_NAME()

,'dbo'

,'statisticsLastUpdate'

,NULL

) AS statement

statement

---------------------------------------------------------------------

AS

SELECT

DB_NAME() AS database_name

,o.NAME AS stat_name

,i.NAME AS [Index Name]

,STATS_DATE(i.[object_id], i.index_id) AS [Statistics Date]

,s.auto_created

,s.no_recompute

,s.user_created

,st.row_count

(1 row(s) affected)

The text returned by the function still contains the “AS” keyword, but removing it is a no-brainer:

DECLARE @cmd nvarchar(max)

SELECT @cmd = master.sys.fn_replgetparsedddlcmd(

OBJECT_DEFINITION(OBJECT_ID('statisticsLastUpdate'))

,'CREATE'

,'VIEW'

,DB_NAME()

,'dbo'

,'statisticsLastUpdate'

,NULL

)

SELECT @cmd = RIGHT(@cmd, LEN(@cmd) - 2) -- Removes "AS"

SELECT @cmd AS statement

statement

-------------------------------------------------------------------

SELECT

DB_NAME() AS database_name

,o.NAME AS stat_name

,i.NAME AS [Index Name]

,STATS_DATE(i.[object_id], i.index_id) AS [Statistics Date]

,s.auto_created

,s.no_recompute

,s.user_created

,st.row_count

(1 row(s) affected)

Now that we are able to read the SELECT statement from a view’s definition, we can execute that statement against any database we like, or even against all the databases in the instance.

-- =============================================

-- Author: Gianluca Sartori - spaghettidba

-- Create date: 2013-04-16

-- Description: Extracts the view definition

-- and runs the statement in the

-- database specified by @db_name

-- If the target database is a pattern,

-- the statement gets executed against

-- all databases matching the pattern.

-- =============================================

CREATE PROCEDURE [dba_execute_view]

@view_name sysname

,@db_name sysname

AS

BEGIN

SET NOCOUNT,

XACT_ABORT,

QUOTED_IDENTIFIER,

ANSI_NULLS,

ANSI_PADDING,

ANSI_WARNINGS,

ARITHABORT,

CONCAT_NULL_YIELDS_NULL ON;

SET NUMERIC_ROUNDABORT OFF;

DECLARE @cmd nvarchar(max)

DECLARE @sql nvarchar(max)

DECLARE @vw_schema sysname

DECLARE @vw_name sysname

IF OBJECT_ID(@view_name) IS NULL

BEGIN

RAISERROR('No suitable object found for name %s',16,1,@view_name)

RETURN

END

IF DB_ID(@db_name) IS NULL

AND @db_name NOT IN ('[USER]','[SYSTEM]')

AND @db_name IS NOT NULL

BEGIN

RAISERROR('No suitable database found for name %s',16,1,@db_name)

RETURN

END

SELECT @vw_schema = OBJECT_SCHEMA_NAME(OBJECT_ID(@view_name)),

@vw_name = OBJECT_NAME(OBJECT_ID(@view_name))

SELECT @cmd = master.sys.fn_replgetparsedddlcmd(

OBJECT_DEFINITION(OBJECT_ID(@view_name))

,'CREATE'

,'VIEW'

,DB_NAME()

,@vw_schema

,@vw_name

,NULL

)

SELECT @cmd = RIGHT(@cmd, LEN(@cmd) - 2) -- Removes "AS"

-- CREATE A TARGET TEMP TABLE

SET @sql = N'

SELECT TOP(0) * INTO #results FROM ' + QUOTENAME(@vw_schema) + N'.' + QUOTENAME(@vw_name) + N';

INSERT #results

EXEC [dba_ForEachDB]

@statement = @cmd,

@name_pattern = @db_name;

SELECT * FROM #results;'

EXEC sp_executesql

@sql

,N'@cmd nvarchar(max), @db_name sysname'

,@cmd

,@db_name

END

The procedure depends on dba_ForEachDB, the stored procedure I posted a couple of years ago that replaces the one shipped by Microsoft. If you still prefer their version, you’re free to modify the code as you wish.

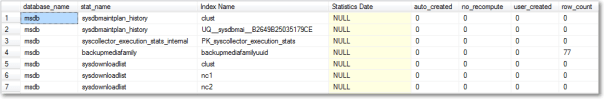

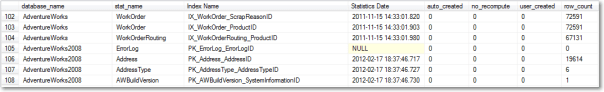

Now that we have a stored procedure that “executes” a view, we can use it to query statistics update information from a different database:

EXEC [dba_execute_view] 'statisticsLastUpdate', 'msdb'

We could also query the same information from all user databases:

EXEC [dba_execute_view] 'statisticsLastUpdate', '[USER]'

That’s it, very easy and straightforward.

Just one suggestion for the SELECT statements in the views: add a DB_NAME() column, in order to understand where the data comes from, or it’s going to be a total mess.

Next steps:

This is just the basic idea: the code can be improved in many ways.

For instance, we could add a parameter to decide whether the results must be piped to a temporary table or not. As you probably know, INSERT…EXEC cannot be nested, so you might want to pipe the results to a table in a different way.

Another thing you might want to add is the ability to order the results according to an additional parameter.

To sum it up, with a little help from Microsoft, we can now safely create a database packed with all our administration stuff and execute the queries against any database in our instance.

Moving system databases to the default data and log paths

Recently I had to assess and tune quite a lot of SQL Server instances and one of the things that is often overlooked is the location of the system databases.

I often see instances where the system databases are located on the system drive under the SQL Server default installation path, which is bad for many reasons, especially for tempdb.

I had to move the system databases so many times that I ended up coding a script to automate the process.

The script finds all system databases that are not sitting in the default data and log paths and issues the ALTER DATABASE statements needed to move the files to the default paths.

Obviously, to let the script work, the default data and log paths must have been set in the instance properties.
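If the instance runs SQL Server 2012 or later, the configured defaults can also be read without touching the registry, which is a handy way to double check what the script is going to use. A minimal sketch (the column aliases are mine):

```sql
-- Sketch only: these two properties are available starting with SQL Server 2012.
-- On earlier versions they return NULL and the registry read is still needed.
SELECT
    SERVERPROPERTY('InstanceDefaultDataPath') AS default_data_path,
    SERVERPROPERTY('InstanceDefaultLogPath')  AS default_log_path;
```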

You may also point out that moving all system databases to the default data and log paths is not always a good idea. And you would be right: for instance, if possible, the tempdb database should be working on a fast dedicated disk. However, very often I find myself dealing with low-end servers where separate data and log disks are a luxury, not to mention a dedicated tempdb disk. If you are concerned about moving tempdb to the default data and log paths, you can modify the script accordingly.

-- =============================================

-- Author: Gianluca Sartori - spaghettidba

-- Create date: 2013-03-22

-- Description: Moves the system databases to the

-- default data and log paths and

-- updates SQL Server startup params

-- accordingly.

-- =============================================

SET NOCOUNT ON;

USE master;

-- Find default data and log paths

-- reading from the registry

DECLARE @defaultDataLocation nvarchar(4000)

DECLARE @defaultLogLocation nvarchar(4000)

EXEC master.dbo.xp_instance_regread

N'HKEY_LOCAL_MACHINE',

N'Software\Microsoft\MSSQLServer\MSSQLServer',

N'DefaultData',

@defaultDataLocation OUTPUT

EXEC master.dbo.xp_instance_regread

N'HKEY_LOCAL_MACHINE',

N'Software\Microsoft\MSSQLServer\MSSQLServer',

N'DefaultLog',

@defaultLogLocation OUTPUT

-- Loop through all system databases

-- and move to the default data and log paths

DECLARE @sql nvarchar(max)

DECLARE stmts CURSOR STATIC LOCAL FORWARD_ONLY

FOR

SELECT

' ALTER DATABASE '+ DB_NAME(database_id) +

' MODIFY FILE ( ' +

' NAME = '''+ name +''', ' +

' FILENAME = '''+

CASE type_desc

WHEN 'ROWS' THEN @defaultDataLocation

ELSE @defaultLogLocation

END +

'\'+ RIGHT(physical_name,CHARINDEX('\',REVERSE(physical_name),1)-1) +'''' +

' )'

FROM sys.master_files

WHERE DB_NAME(database_id) IN ('master','model','msdb','tempdb')

AND (

-- compare each file with the default path for its own type

physical_name NOT LIKE

CASE type_desc

WHEN 'ROWS' THEN @defaultDataLocation

ELSE @defaultLogLocation

END + '%'

)

OPEN stmts

FETCH NEXT FROM stmts INTO @sql

WHILE @@FETCH_STATUS = 0

BEGIN

PRINT @sql

EXEC(@sql)

FETCH NEXT FROM stmts INTO @sql

END

CLOSE stmts

DEALLOCATE stmts

-- Update SQL Server startup parameters

-- to reflect the new master data and log

-- files locations

DECLARE @val nvarchar(500)

DECLARE @key nvarchar(100)

DECLARE @regvalues TABLE (

parameter nvarchar(100),

value nvarchar(500)

)

INSERT @regvalues

EXEC master.dbo.xp_instance_regenumvalues

N'HKEY_LOCAL_MACHINE',

N'SOFTWARE\Microsoft\MSSQLServer\MSSQLServer\Parameters'

DECLARE reg CURSOR STATIC LOCAL FORWARD_ONLY

FOR

SELECT *

FROM @regvalues

WHERE value LIKE '-d%'

OR value LIKE '-l%'

OPEN reg

FETCH NEXT FROM reg INTO @key, @val

WHILE @@FETCH_STATUS = 0

BEGIN

IF @val LIKE '-d%'

SET @val = '-d' + (

SELECT physical_name

FROM sys.master_files

WHERE DB_NAME(database_id) = 'master'

AND type_desc = 'ROWS'

)

IF @val LIKE '-l%'

SET @val = '-l' + (

SELECT physical_name

FROM sys.master_files

WHERE DB_NAME(database_id) = 'master'

AND type_desc = 'LOG'

)

EXEC master.dbo.xp_instance_regwrite

N'HKEY_LOCAL_MACHINE',

N'SOFTWARE\Microsoft\MSSQLServer\MSSQLServer\Parameters',

@key,

N'REG_SZ',

@val

FETCH NEXT FROM reg INTO @key, @val

END

CLOSE reg

DEALLOCATE reg

After running this script, you can shut down the SQL Server service and move the data and log files to the appropriate locations.

When the files are ready, you can bring SQL Server back online.
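Once the instance is back online, a quick query against sys.master_files shows whether every system database file now points to the expected location. This is just a sanity check sketch, using the same catalog view the script relies on:

```sql
-- Sketch only: lists the registered location of every system database file.
SELECT
    DB_NAME(database_id) AS database_name,
    name                 AS logical_name,
    type_desc,
    physical_name
FROM sys.master_files
WHERE DB_NAME(database_id) IN ('master','model','msdb','tempdb')
ORDER BY database_id, file_id;
```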

BE CAREFUL! Before running this script against a clustered instance, check what the xp_instance_regread commands return: I have seen cases with SQL Server not reading from the appropriate keys.

Data Collector Clustering Woes

During the last few days I’ve been struggling to work around something that seems to be a bug in SQL Server 2008 R2 Data Collector in a clustered environment. It’s been quite a struggle, so I decided to post my findings and my resolution, hoping I didn’t contend in vain.

SYMPTOMS:

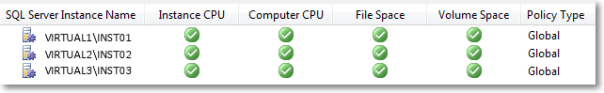

After setting up a Utility Control Point, I started to enroll my instances to the UCP and everything was looking fine.

When an instance is enrolled to a UCP, the sysutility_mdw database can be used as a target Management Datawarehouse for non-utility collection sets, such as the built-in system collection sets. Actually, the utility database is the only possible target for any collection set, since the data collection configuration is shared between utility and non-utility collection sets.

That said, I enabled and started the system collection sets and eagerly waited for some data to show up on the built-in reports. As nothing turned up, I checked the data collector jobs and they were executing successfully, so the data had to be there, hidden somewhere.

In fact, the data had been collected and uploaded successfully, but it didn’t show up in the reports because of the way the data source had been registered in the Management Datawarehouse internal tables.

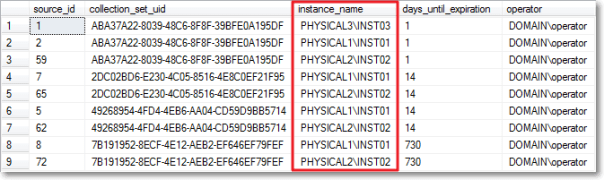

A quick look at [core].[source_info_internal] unveiled the root cause of the issue: the clustered instances had been registered with the physical name of the cluster node and not with the virtual server name of the instance.

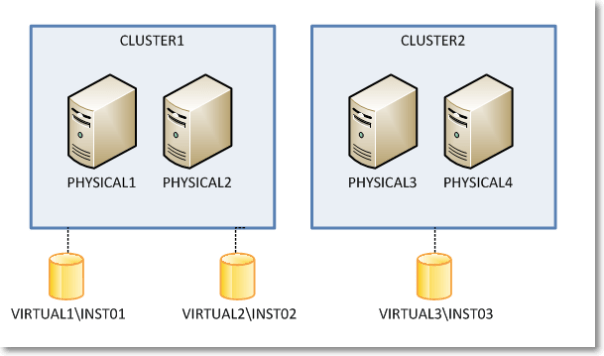

The built-in Data Collector reports filter data in this table using the server name connected in SSMS, which is obviously very different from what is found in the data sources table. For instance, when connected to the clustered instance VIRTUAL1\INST01, I didn’t see any performance data showing in the report because the data had been collected using the physical network name of the owner node (e.g. PHYSICAL1\INST01).
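To see the two names side by side on a clustered instance, the server properties can be queried directly. The sketch below only uses documented properties; the column aliases are mine.

```sql
-- Sketch only: on a failover cluster instance, machine_name is the virtual
-- server name, while physical_node_name is the node currently owning the instance.
SELECT
    @@SERVERNAME                                  AS server_name,
    SERVERPROPERTY('MachineName')                 AS machine_name,
    SERVERPROPERTY('ComputerNamePhysicalNetBIOS') AS physical_node_name,
    SERVERPROPERTY('InstanceName')                AS instance_name,
    SERVERPROPERTY('IsClustered')                 AS is_clustered;
```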

I know it may sound confusing at this point, so keep the following picture in mind while looking at servers and instances in the example.

So, what was wrong with my setup? How could it be fixed?

I tried everything that came to my mind to no avail. In order, I tried:

- Wiping out the datawarehouse database (sysutility_mdw)

- Reinstalling the instance hosting the datawarehouse

- Changing the upload jobs (this one looked promising, because some job steps contained the $(MACH)\$(INST) tokens instead of the $(SRVR) token I would have expected, but it didn’t work either)

- Updating data in the tables directly

Nothing I tried solved the issue: every time the upload jobs ran at the enrolled instances, the wrong instance names turned up in the data sources table.

I suspect something was wrong in the Management Datawarehouse instance, since the same issue affected all the enrolled instances, no matter where they were installed. The problem is that I was unable to find a way to make it work.

The only thing that worked for me was forcing SQL Server to understand what my cluster setup looks like and preventing it from using cluster node names instead of virtual instance names.

As ugly as it can be, the only fix that worked was a view + INSTEAD OF TRIGGER combination.

First of all we need some tables to store the cluster layout, with nodes and instances.

-- Create a couple of tables in msdb to

-- describe the cluster topology

USE msdb;

GO

CREATE TABLE sysutility_ucp_managed_cluster_nodes (

cluster_id int NOT NULL,

node_name sysname NOT NULL PRIMARY KEY CLUSTERED

)

GO

CREATE TABLE sysutility_ucp_managed_cluster_servers (

cluster_id int NOT NULL,

virtual_server_name sysname NOT NULL PRIMARY KEY CLUSTERED,

instance_name sysname NULL,

server_name AS virtual_server_name + ISNULL('\' + NULLIF(instance_name,'MSSQLSERVER'),'')

)

GO

INSERT INTO sysutility_ucp_managed_cluster_nodes (cluster_id, node_name)

VALUES (1,'PHYSICAL1'),

(1,'PHYSICAL2'),

(2,'PHYSICAL3'),

(2,'PHYSICAL4')

GO

INSERT INTO sysutility_ucp_managed_cluster_servers (cluster_id, virtual_server_name, instance_name)

VALUES (1,'VIRTUAL1','INST01'),

(1,'VIRTUAL2','INST02'),

(2,'VIRTUAL3','INST03')

GO

GRANT SELECT ON object::sysutility_ucp_managed_cluster_nodes TO [dc_proxy];

GRANT SELECT ON object::sysutility_ucp_managed_cluster_servers TO [dc_proxy];

Then we need to update the data sources collected using the cluster node name instead of the virtual name:

USE [sysutility_mdw]

GO

UPDATE trg

SET trg.instance_name = REPLACE(trg.instance_name COLLATE database_default, nodes.node_name + '\', srv.virtual_server_name + '\')

FROM [core].[source_info_internal] AS trg

INNER JOIN msdb.dbo.sysutility_ucp_managed_cluster_nodes AS nodes

ON nodes.node_name = SUBSTRING(trg.instance_name, 1, LEN(nodes.node_name)) COLLATE database_default

INNER JOIN msdb.dbo.sysutility_ucp_managed_cluster_servers AS srv

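ON nodes.cluster_id = srv.cluster_id

-- match the instance name too, so that a cluster hosting several virtual servers yields a single row

AND nodes.node_name + ISNULL('\' + NULLIF(srv.instance_name,'MSSQLSERVER'),'') = trg.instance_name COLLATE database_default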

-- Now server names should be ok

SELECT *

FROM sysutility_mdw.[core].[source_info_internal]

GO

Now we will replace the data sources table with a view that multiplies virtual server names for each possible owner node. This is required because the collection sets keep trying to upload data using the cluster node name and they fail miserably when the data source is not found in the table (“the specified collection set is not valid in this data warehouse”).

USE [sysutility_mdw]

GO

-- Rename the data sources table

EXEC sp_rename 'core.source_info_internal', 'source_info_internal_ms'

USE [sysutility_mdw]

GO

SET ANSI_NULLS ON

GO

SET QUOTED_IDENTIFIER ON

GO

CREATE VIEW [core].[source_info_internal]

AS

SELECT sii.source_id

,sii.collection_set_uid

,instance_name = (nds.node_name + ISNULL('\' + NULLIF(srv.instance_name,'MSSQLSERVER'),'')) COLLATE Latin1_General_CI_AI

,sii.days_until_expiration

,sii.operator

FROM core.source_info_internal_ms AS sii

INNER JOIN msdb.dbo.sysutility_ucp_managed_cluster_servers AS srv

ON sii.instance_name COLLATE database_default = srv.server_name

INNER JOIN msdb.dbo.sysutility_ucp_managed_cluster_nodes AS nds

ON nds.cluster_id = srv.cluster_id

UNION ALL

SELECT *

FROM core.source_info_internal_ms

GO

And now the last thing we need to create is a trigger on the view, in order to control what gets written to the original table.

With this in place, we should have only “good” server names showing up in the instance_name column.

CREATE TRIGGER [core].[TR_source_info_internal_IU]

ON [core].[source_info_internal]

INSTEAD OF INSERT, UPDATE, DELETE

AS

BEGIN

SET NOCOUNT ON;

-- Update rows using the source_id

UPDATE trg

SET days_until_expiration = i.days_until_expiration

FROM core.source_info_internal_ms AS trg

INNER JOIN inserted AS i

ON trg.source_id = i.source_id

WHERE EXISTS (

SELECT 1

FROM deleted

WHERE source_id = i.source_id

)

-- Turn INSERTs into UPDATEs using the

-- cluster physical / virtual conversion

UPDATE trg

SET days_until_expiration = i.days_until_expiration

FROM core.source_info_internal_ms AS trg

INNER JOIN msdb.dbo.sysutility_ucp_managed_cluster_servers AS srv

ON srv.server_name = trg.instance_name COLLATE database_default

INNER JOIN msdb.dbo.sysutility_ucp_managed_cluster_nodes AS nodes

ON nodes.cluster_id = srv.cluster_id

INNER JOIN inserted AS i

ON trg.collection_set_uid = i.collection_set_uid

AND trg.operator = i.operator

AND nodes.node_name + ISNULL('\' + NULLIF(srv.instance_name,'MSSQLSERVER'),'') = i.instance_name COLLATE database_default

WHERE NOT EXISTS (

SELECT 1

FROM deleted

WHERE source_id = i.source_id

)

-- Handle proper INSERTs

;WITH newrows AS (

SELECT collection_set_uid, v_server.instance_name, days_until_expiration, operator

FROM inserted AS i

CROSS APPLY (

SELECT instance_name = COALESCE((

SELECT srv.server_name

FROM msdb.dbo.sysutility_ucp_managed_cluster_servers AS srv

INNER JOIN msdb.dbo.sysutility_ucp_managed_cluster_nodes AS nodes

ON nodes.cluster_id = srv.cluster_id

WHERE srv.server_name = i.instance_name COLLATE database_default

),(

SELECT srv.server_name

FROM msdb.dbo.sysutility_ucp_managed_cluster_servers AS srv

INNER JOIN msdb.dbo.sysutility_ucp_managed_cluster_nodes AS nodes

ON nodes.cluster_id = srv.cluster_id

WHERE nodes.node_name + ISNULL('\' + NULLIF(srv.instance_name,'MSSQLSERVER'),'') = i.instance_name COLLATE database_default

), (

SELECT i.instance_name COLLATE database_default

)

) -- end coalesce

) AS v_server

WHERE NOT EXISTS (

SELECT 1

FROM deleted

WHERE source_id = i.source_id

)

)

INSERT INTO core.source_info_internal_ms (collection_set_uid, instance_name, days_until_expiration, operator)

SELECT collection_set_uid, instance_name, days_until_expiration, operator

FROM newrows

WHERE NOT EXISTS (

SELECT 1

FROM core.source_info_internal_ms

WHERE collection_set_uid = newrows.collection_set_uid

AND instance_name = newrows.instance_name

AND operator = newrows.operator

)

DELETE trg

FROM core.source_info_internal_ms AS trg

WHERE EXISTS (

SELECT 1

FROM deleted

WHERE source_id = trg.source_id

)

AND NOT EXISTS (

SELECT 1

FROM inserted

WHERE source_id = trg.source_id

)

END

Obviously I don’t have access to the source code of the Data Collector, but I suspect it uses a component which is not cluster-aware (dcexec.exe) and for some reason it ends up using the wrong IP address to communicate with the management datawarehouse. I have nothing to support my supposition: it only seems reasonable to me and it resembles something I experienced in the past with non-cluster-aware services, such as the SQL Browser.

Is this a bug? Definitely!

Should I report it on Connect? Maybe: the results with past items are so discouraging that I don’t think I’ll bother taking the time to file it. It must be said that reproducing this error is not easy: any other failover cluster instance I have lying around was not affected by this issue, so I guess it’s an edge case. Nevertheless, worth fixing.

UPDATE 15 sept 2016:

Turns out that the whole problem arises ONLY when a proxy account runs the data collection job steps. If the job steps are run impersonating the SQL Server Agent account, the error doesn’t turn up. I suggest this solution rather than the complicated view/trigger solution proposed here.
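To find out which job steps run under a proxy account, the SQL Server Agent tables in msdb can be joined as in the sketch below. It lists every step that uses a proxy, not just the data collection ones, so you will have to pick the relevant jobs from the output yourself.

```sql
-- Sketch only: lists all SQL Server Agent job steps that run under a proxy.
SELECT
    j.name      AS job_name,
    s.step_id,
    s.step_name,
    s.subsystem,
    p.name      AS proxy_name
FROM msdb.dbo.sysjobs AS j
INNER JOIN msdb.dbo.sysjobsteps AS s
    ON s.job_id = j.job_id
INNER JOIN msdb.dbo.sysproxies AS p
    ON p.proxy_id = s.proxy_id
ORDER BY j.name, s.step_id;
```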

dba_runCHECKDB v2(012)

If you are one among the many that downloaded my consistency check stored procedure called “dba_RunCHECKDB”, you may have noticed a “small” glitch… it doesn’t work on SQL Server 2012!

This is due to the resultset definition of DBCC CHECKDB, which has changed again in SQL Server 2012. Trying to pipe the results of that command into a table defined for SQL Server 2008 produces a column mismatch and obviously fails.

Fixing the code is very easy indeed, but I could never find the time to post the corrected version until today.

Also, I had to discover the new table definition for DBCC CHECKDB, and it was not as easy as it used to be in SQL Server 2008. In fact, a couple of days ago I posted a way to discover the new resultset definition working around the cumbersome metadata discovery feature introduced in SQL Server 2012.

Basically, the new output of DBCC CHECKDB now includes 6 new columns:

CREATE TABLE ##DBCC_OUTPUT(

Error int NULL,

[Level] int NULL,

State int NULL,

MessageText nvarchar(2048) NULL,

RepairLevel nvarchar(22) NULL,

Status int NULL,

DbId int NULL, -- was smallint in SQL2005

DbFragId int NULL, -- new in SQL2012

ObjectId int NULL,

IndexId int NULL,

PartitionId bigint NULL,

AllocUnitId bigint NULL,

RidDbId smallint NULL, -- new in SQL2012

RidPruId smallint NULL, -- new in SQL2012

[File] smallint NULL,

Page int NULL,

Slot int NULL,

RefDbId smallint NULL, -- new in SQL2012

RefPruId smallint NULL, -- new in SQL2012

RefFile smallint NULL, -- new in SQL2012

RefPage int NULL,

RefSlot int NULL,

Allocation smallint NULL

)

If you Google the name of one of these new columns, you will probably find a lot of blog posts (no official documentation, unfortunately) that describe the new output of DBCC CHECKDB, but none of them is strictly correct: all of them indicate the smallint columns as int.

Not a big deal, actually, but still incorrect.
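For reference, this is roughly how a table with that definition can be filled. It is only a sketch, not the code of the procedure: it assumes a SQL Server 2012 instance, that the ##DBCC_OUTPUT table above has already been created, and msdb as an arbitrary database to check.

```sql
-- Sketch only: assumes SQL Server 2012 and an existing ##DBCC_OUTPUT table
-- created with the definition shown above. msdb is an arbitrary example.
INSERT ##DBCC_OUTPUT
EXEC ('DBCC CHECKDB(''msdb'') WITH TABLERESULTS, NO_INFOMSGS, ALL_ERRORMSGS;');

-- With NO_INFOMSGS, a clean database is expected to return no rows at all
SELECT Error, [Level], State, MessageText, ObjectId, IndexId
FROM ##DBCC_OUTPUT;
```

On other versions the column list may differ, in which case the INSERT fails with a column mismatch, exactly as described above.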

I will refrain from posting the whole procedure here: I updated the code in the original post, and you can also download it from the Code Repository.

As usual, suggestions and comments are welcome.

Discovering resultset definition of DBCC commands in SQL Server 2012

Back in 2011 I showed a method to discover the resultset definition of DBCC undocumented commands.

At the time, SQL Server 2012 had not been released yet and nothing suggested that the linked server trick could stop working on the new major version. Surprisingly enough, it did.

If you try to run the same code shown in that old post on a 2012 instance, you will get quite an explicit error message:

DECLARE @srv nvarchar(4000);

SET @srv = @@SERVERNAME; -- gather this server name

-- Create the linked server

EXEC master.dbo.sp_addlinkedserver

@server = N'LOOPBACK',

@srvproduct = N'SQLServ', -- it’s not a typo: it can’t be “SQLServer”

@provider = N'SQLNCLI', -- change to SQLOLEDB for SQLServer 2000

@datasrc = @srv;

-- Set the authentication to "current security context"

EXEC master.dbo.sp_addlinkedsrvlogin

@rmtsrvname = N'LOOPBACK',

@useself = N'True',

@locallogin = NULL,

@rmtuser = NULL,

@rmtpassword = NULL;

USE tempdb;

GO

CREATE PROCEDURE loginfo

AS

BEGIN

SET NOCOUNT ON;

DBCC LOGINFO();

END

GO

SELECT *

INTO tempdb.dbo.loginfo_output

FROM OPENQUERY(LOOPBACK, 'SET FMTONLY OFF; EXEC tempdb.dbo.loginfo');

DROP PROCEDURE loginfo;

GO

Msg 11528, Level 16, State 1, Procedure sp_describe_first_result_set, Line 1
The metadata could not be determined because statement 'DBCC LOGINFO();' in procedure 'loginfo' does not support metadata discovery.

This behaviour has to do with the way SQL Server 2012 tries to discover metadata at parse/bind time, when the resultset is not available yet for DBCC commands.
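The same metadata discovery can be invoked directly on a 2012 instance, without any linked server, by means of sys.dm_exec_describe_first_result_set. A minimal sketch follows: I would expect it to report the failure in the error columns rather than returning a column list, but I am not showing any output here because I have not captured it.

```sql
-- Sketch only: requires SQL Server 2012 or later.
-- For statements that do not support metadata discovery, the error details
-- should show up in the error_* columns of the result.
SELECT error_number, error_severity, error_state, error_message
FROM sys.dm_exec_describe_first_result_set(N'DBCC LOGINFO();', NULL, 0);
```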

Fortunately, there is still a way to discover metadata when you have a SQL Server instance of a previous version available.

On my laptop I have a 2008R2 instance I can use to query the 2012 instance with a linked server:

-- run this on the 2008R2 instance

USE [master]

GO

EXEC master.dbo.sp_addlinkedserver

@server = N'LOCALHOST\SQL2012'

,@srvproduct = N'SQL Server'

EXEC master.dbo.sp_addlinkedsrvlogin

@rmtsrvname = N'LOCALHOST\SQL2012'

,@useself = N'True'

,@locallogin = NULL

,@rmtuser = NULL

,@rmtpassword = NULL

GO

SELECT *

INTO tempdb.dbo.loginfo_output

FROM OPENQUERY([LOCALHOST\SQL2012], 'SET FMTONLY OFF; EXEC tempdb.dbo.loginfo');

GO

This code pipes the results of the DBCC command into a table in the tempdb database in my 2008R2 instance. The table can now be scripted using SSMS:

Using the 2008R2 instance as a “Trojan Horse” for the metadata discovery, you can see that the resultset definition of DBCC LOGINFO() has changed again in SQL Server 2012:

CREATE TABLE [dbo].[loginfo_output](
    [RecoveryUnitId] [int] NULL, -- new in SQL2012
    [FileId] [int] NULL,
    [FileSize] [bigint] NULL,
    [StartOffset] [bigint] NULL,
    [FSeqNo] [int] NULL,
    [Status] [int] NULL,
    [Parity] [tinyint] NULL,
    [CreateLSN] [numeric](25, 0) NULL
)

This trick will be particularly useful for an upcoming (and long overdue) post, so… stay tuned!

More on converting Trace Files

Yesterday I posted a method to convert trace files from SQL Server 2012 to SQL Server 2008R2 using a trace table.

As already mentioned in that post, having to load the whole file into a trace table has many shortcomings:

- The trace file can be huge and loading it into a trace table could take forever

- The trace data will consume even more space when loaded into a SQL Server table

- The table has to be written back to disk in order to obtain the converted file

- You need to have Profiler 2008 in order to write a trace in the “old” format

- You need to have a SQL Server 2008 instance to dump the trace table back to the file, as Profiler 2008 won’t connect to a SQL Server 2012 instance

That said, it would really be useful if the file could be updated in place, changing just the version byte…

No worries: PowerShell to the rescue!

## =============================================
## Author: Gianluca Sartori - @spaghettidba
## Create date: 2012-11-07
## Description: Changes the version information
##              in the header of a SQL Server trace
## =============================================
cls

# Enter your filename here
$fileName = "somefile.trc"

# The version information we want to write: 0x0A = 10 = SQLServer 2008
[Byte[]] $versionData = 0x0A

# The offset of the version information in the file
$offset = 390

[System.IO.FileMode] $open = [System.IO.FileMode]::OpenOrCreate
$stream = New-Object System.IO.FileStream -ArgumentList $fileName, $open
$stream.Seek($offset, [System.IO.SeekOrigin]::Begin);
$stream.Write($versionData, 0, $versionData.Length);
$stream.Close()

This script overwrites the version byte in the trace file, which lies at offset 390, changing it from 11 to 10.

This way, even a huge 100 GB trace file can be converted in milliseconds, ready to be consumed by ReadTrace or Profiler.
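If you would rather check the byte before overwriting it, the same idea can be sketched in a few lines of Python. This is only an illustration, not a port of the PowerShell script: the function names are made up for the example, the only facts taken from the post are the offset (390) and the two version values (11 and 10), and the dummy file merely mimics the version byte, it is not a real trace.

```python
import os
import tempfile

VERSION_OFFSET = 390  # offset of the version byte, as stated in the post


def read_trace_version(path):
    """Return the byte stored at VERSION_OFFSET, or None if the file is too short."""
    with open(path, "rb") as f:
        f.seek(VERSION_OFFSET)
        data = f.read(1)
    return data[0] if data else None


def patch_trace_version(path, expected=11, new=10):
    """Overwrite the version byte in place, but only if it holds the expected value."""
    current = read_trace_version(path)
    if current != expected:
        raise ValueError(
            f"unexpected version byte {current!r} at offset {VERSION_OFFSET}"
        )
    # "r+b" opens an existing file for in-place update without truncating it
    with open(path, "r+b") as f:
        f.seek(VERSION_OFFSET)
        f.write(bytes([new]))
    return new


def make_dummy_trace(version=11, size=1024):
    """Create a throwaway file that only mimics the version byte (assumption:
    everything else is zero-filled, which a real trace file is not)."""
    fd, path = tempfile.mkstemp(suffix=".trc")
    with os.fdopen(fd, "wb") as f:
        buf = bytearray(size)
        buf[VERSION_OFFSET] = version
        f.write(buf)
    return path
```

Refusing to write unless the byte currently holds 11 is a deliberate choice: it keeps the sketch from silently corrupting a file that is not a SQL Server 2012 trace, or one that has already been converted.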

Enjoy!

P.S.: I haven’t tried, but I guess it could work with previous versions. However, tracing is deprecated in SQL Server 2012 and I guess it doesn’t introduce many differences from the SQL Server 2008 trace file format. The same cannot be said for SQL Server 2005 and I suppose that the file wouldn’t open anyway in Profiler. Unfortunately, I don’t have a SQL Server 2005 around to test.

Convert a Trace File from SQLServer 2012 to SQLServer 2008R2

Recently I started using RML utilities quite a lot.

ReadTrace and Ostress are awesome tools for benchmarking and baselining, and many of their features have not been fully implemented in SQLServer 2012, though Distributed Replay was a nice addition.

However, as you may have noticed, ReadTrace is just unable to read trace files from SQLServer 2012, so you may get stuck with a trace file you won’t be able to process.

When I first hit this issue, I immediately thought I could use a trace table to store the data and then use Profiler again to write back to a trace file.

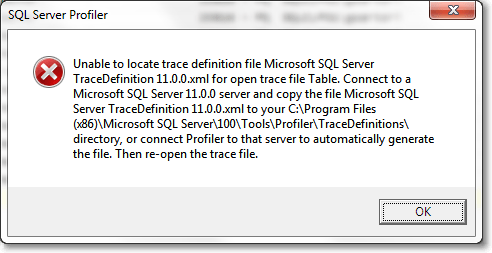

The idea wasn’t bad, but it turns out that Profiler 2012 will always write trace files in the new format, with no way to specify the old one. On the other hand, Profiler 2008R2 can’t read trace data from a table written by Profiler 2012, throwing an ugly exception:

Interesting! So, looks like Profiler stores version information and other metadata somewhere in the trace table, but where exactly?

It might sound funny, but I had to trace Profiler with Profiler in order to know! Looking at the profiler trace, the first thing that Profiler does when trying to open a trace table is this:

declare @p1 int
set @p1=180150003
declare @p3 int
set @p3=2
declare @p4 int
set @p4=4
declare @p5 int
set @p5=-1
exec sp_cursoropen @p1 output,N'select BinaryData from [dbo].[trace_test] where RowNumber=0',@p3 output,@p4 output,@p5 output
select @p1, @p3, @p4, @p5

So, looks like Profiler stores its metadata in the first row (RowNumber = 0), in binary format.

That was the clue I was looking for!

I loaded a trace file in the old format into another trace table and I started to compare the data to find similarities and differences.

I decided to break the binary headers into Dwords and paste the results in WinMerge to hunt the differences:

-- Break the header row in the trace table into DWords
-- in order to compare easily in WinMerge
SELECT SUBSTRING(data, 8 * (n - 1) + 3, 8) AS dword
    ,n AS dwordnum
FROM (
    SELECT CONVERT(VARCHAR(max), CAST(binarydata AS VARBINARY(max)), 1) AS data
    FROM tracetable
    WHERE rownumber = 0
) AS src
INNER JOIN (
    SELECT DISTINCT ROW_NUMBER() OVER (
            ORDER BY (SELECT NULL)
        ) / 8 AS n
    FROM sys.all_columns AS ac
) AS v
    ON n > 0
    AND (n - 1) * 8 <= LEN(data) - 3
ORDER BY 2

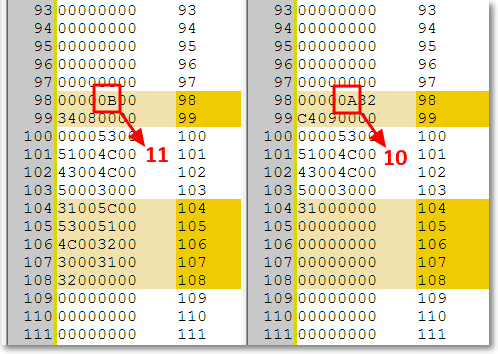

If you copy/paste the output in WinMerge you can easily spot the difference around the 100th dword:

Hmmmm, seems promising. Can those “11” and “10” possibly represent the trace version? Let’s try and see.
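The comparison itself can also be done without WinMerge. The following Python sketch shows the idea under stated assumptions: the two headers are already available as raw bytes (here they are made-up, zero-filled buffers that differ only at byte offset 390), and the helper names are hypothetical. Dwords are numbered from 1, like the dwordnum column in the query above.

```python
def split_dwords(header: bytes):
    """Split a binary header into 4-byte chunks rendered as 8 hex digits."""
    return [header[i:i + 4].hex().upper() for i in range(0, len(header), 4)]


def diff_dwords(old: bytes, new: bytes):
    """Return (dword number, old dword, new dword) for each position that differs."""
    pairs = zip(split_dwords(old), split_dwords(new))
    return [(n, a, b) for n, (a, b) in enumerate(pairs, start=1) if a != b]


# Made-up headers: 400 zero bytes that differ only at byte offset 390.
old_header = bytearray(400)
new_header = bytearray(400)
old_header[390] = 0x0A  # version 10
new_header[390] = 0x0B  # version 11

print(diff_dwords(bytes(old_header), bytes(new_header)))
# prints [(98, '00000A00', '00000B00')]
```

Byte offset 390 falls in the 98th dword (390 / 4 = 97 full dwords before it), which is consistent with a difference showing up around the 100th dword.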

Now we should just update that section of the header to let the magic happen:

-- Use a table variable to cast the trace
-- header from image to varbinary(max)
DECLARE @header TABLE (
    header varbinary(max)
)

-- insert the trace header into the table
INSERT INTO @header
SELECT binarydata
FROM tracetable
WHERE RowNumber = 0

-- update the byte at offset 390 with version 10 (SQLServer 2008)
-- instead of version 11 (SQLServer 2012)
UPDATE @header
SET header.WRITE(0x0A, 390, 1)

-- write the header back to the trace table
UPDATE tracetable
SET binarydata = (SELECT header FROM @header)
WHERE RowNumber = 0

The trace table can now be opened with Profiler2008R2 and written back to a trace file. Hooray!

Yes, I know, using a trace table can take a whole lot of time and consume a lot of disk space when the file is huge (and typically RML traces are), but this is the best I could come up with.

I tried to look into the trace file itself, but I could not find a way to diff the binary contents in an editor. You may be smarter than me and give it a try: in that case, please, post a comment here.

Using this trick, ReadTrace can happily process the trace file and let you perform your benchmarks, at least until Microsoft decides to update RML Utilities to 2012.

UPDATE 11/08/2012: The use of a trace table is not necessary: the trace file can be updated in place, using the script found here.

Formatting dates in T-SQL

First of all, let me say it: I don’t think this should ever be done on the database side. Formatting dates is a task that belongs to the application side, and procedural languages already come with plenty of functions to deal with dates and regional formats.

However, since the question keeps coming up on the forums at SQLServerCentral, I decided to code a simple scalar UDF to format dates.

/*

* AUTHOR: Gianluca Sartori @spaghettidba

* Returns a date formatted according to the format string.

* The format string can contain the following tokens in any order:

*

* yy --> Year, two digits

* YYYY --> Year, four digits

* MM --> Month, two digits

* m --> Month, one digit

* DD --> Day, two digits

* d --> Day, one digit

* HH --> Hour, two digits

* h --> Hour, one digit

* NN --> Minute, two digits

* n --> Minute, one digit

* SS --> Second, two digits

* s --> Second, one digit

* AP --> AM/PM

*

* Any character not in the token list gets concatenated

* to the string and left untouched.

*

* EXAMPLE:

* SELECT dbo.formatDate(GETDATE(), 'YYYY-MM-DD hh:nn:ss')

* OUTPUT: 2007-01-25 17:35:21

*

* SELECT dbo.formatDate(GETDATE(), 'DD-MM-YYYY')

* OUTPUT: 25-01-2007

*/

CREATE FUNCTION [dbo].[formatDate](@date as datetime, @format_string as varchar(50) )

RETURNS varchar(50)

AS

BEGIN

DECLARE @format varchar(50)

DECLARE @result AS varchar(50)

DECLARE @iter AS int

DECLARE @prevchar AS char(1)

DECLARE @currchar AS char(1)

DECLARE @currtoken AS varchar(4)

SET @iter = 1

SET @result = ''

SET @format = CONVERT(varchar(50),@format_string) COLLATE Latin1_General_CS_AS

WHILE @iter <= LEN(@format)

BEGIN

SET @currchar = CONVERT(char(1),SUBSTRING(@format,@iter,1)) COLLATE Latin1_General_CS_AS

IF @currchar <> @prevchar OR @iter = LEN(@format)

BEGIN

SET @currtoken =

CASE (@prevchar) COLLATE Latin1_General_CS_AS -- Use a case-sensitive collation

WHEN 'Y' THEN RIGHT('0000' + CAST(YEAR(@date) AS varchar(4)),4)

WHEN 'y' THEN RIGHT('00' + CAST(YEAR(@date) AS varchar(4)),2)

WHEN 'M' THEN RIGHT('00' + CAST(MONTH(@date) AS varchar(2)),2)

WHEN 'm' THEN CAST(MONTH(@date) AS varchar(2))

WHEN 'D' THEN RIGHT('00' + CAST(DAY(@date) AS varchar(2)),2)

WHEN 'd' THEN CAST(DAY(@date) AS varchar(2))

WHEN 'H' THEN RIGHT('00' + CAST(DATEPART(hour,@date) AS varchar(2)),2)

WHEN 'h' THEN CAST(DATEPART(hour,@date) AS varchar(2))

WHEN 'N' THEN RIGHT('00' + CAST(DATEPART(minute,@date) AS varchar(2)),2)

WHEN 'n' THEN CAST(DATEPART(minute,@date) AS varchar(2))

WHEN 'S' THEN RIGHT('00' + CAST(DATEPART(second,@date) AS varchar(2)),2)

WHEN 's' THEN CAST(DATEPART(second,@date) AS varchar(2))

WHEN 'A' THEN CASE WHEN DATEPART(hour,@date) >= 12 THEN 'PM' ELSE 'AM' END

WHEN ' ' THEN ' '

ELSE RTRIM(@prevchar)

END

SET @result = @result + @currtoken

END

SET @prevchar = @currchar COLLATE Latin1_General_CS_AS

SET @iter = @iter + 1

END

RETURN @result

END

Let’s see this function in action:

SELECT dbo.formatDate(GETDATE(), 'YYYY-MM-d h:NN:SS AP')
-- RETURNS: 2011-10-5 18:07:09 PM

SELECT dbo.formatDate(GETDATE(), 'YYYY-MM-DD HH:NN:SS')
-- RETURNS: 2011-10-05 18:07:09

The code is simple and (I hope) clear enough. It’s not intended to be the best way to format dates in T-SQL and, honestly, I hope it contains some nasty hidden bug, because you shouldn’t be using this code at all!
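Since this kind of formatting belongs on the application side anyway, here is a rough Python sketch of the same token scheme. It is not a translation of the T-SQL function above: it groups the format string into runs of identical characters with itertools.groupby, the names (TOKENS, format_date) are made up for the example, and it keeps non-token characters exactly as written.

```python
from datetime import datetime
from itertools import groupby

# Token letter -> function producing the text for that date part.
# Upper-case letters pad with zeros, lower-case letters do not.
TOKENS = {
    "Y": lambda d: f"{d.year:04d}",
    "y": lambda d: f"{d.year % 100:02d}",
    "M": lambda d: f"{d.month:02d}",
    "m": lambda d: str(d.month),
    "D": lambda d: f"{d.day:02d}",
    "d": lambda d: str(d.day),
    "H": lambda d: f"{d.hour:02d}",
    "h": lambda d: str(d.hour),
    "N": lambda d: f"{d.minute:02d}",
    "n": lambda d: str(d.minute),
    "S": lambda d: f"{d.second:02d}",
    "s": lambda d: str(d.second),
}


def format_date(date, format_string):
    """Replace each run of a token letter with the matching date part."""
    am_pm = "PM" if date.hour >= 12 else "AM"
    pieces = []
    # "AP" is the only two-letter token, so split on it first
    for part in format_string.split("AP"):
        out = []
        for char, run in groupby(part):
            literal = "".join(run)
            # A run of a token letter becomes one date part;
            # anything else is copied through unchanged.
            out.append(TOKENS[char](date) if char in TOKENS else literal)
        pieces.append("".join(out))
    return am_pm.join(pieces)


when = datetime(2011, 10, 5, 18, 7, 9)
print(format_date(when, "YYYY-MM-d h:NN:SS AP"))  # prints 2011-10-5 18:07:09 PM
print(format_date(when, "YYYY-MM-DD HH:NN:SS"))   # prints 2011-10-05 18:07:09
```

In real application code the built-in strftime method of datetime is the better choice; the sketch only mirrors the token list of the function so the two can be compared side by side.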

For more information on custom date formats in SQLServer, see this post: SQL Server and custom date formats