Blog Archives

Non-unique indexes that COULD be unique

In my last post I showed a query to identify non-unique indexes that should be unique.

You may have other indexes that could be unique based on the data they contain, but are not.

To find out, you just need to query each of those indexes and group by the whole key, filtering out those that have duplicate values. It may look like an overwhelming amount of work, but the good news is I have a script for that:

DECLARE @sql nvarchar(max);

WITH indexes AS (

SELECT

QUOTENAME(OBJECT_SCHEMA_NAME(uq.object_id)) AS [schema_name]

,QUOTENAME(OBJECT_NAME(uq.object_id)) AS table_name

,uq.name AS index_name

,cols.name AS cols

FROM sys.indexes AS uq

CROSS APPLY (

SELECT STUFF((

SELECT ',' + QUOTENAME(sc.name) AS [text()]

FROM sys.index_columns AS uc

INNER JOIN sys.columns AS sc

ON uc.column_id = sc.column_id

AND uc.object_id = sc.object_id

WHERE uc.object_id = uq.object_id

AND uc.index_id = uq.index_id

AND uc.is_included_column = 0

FOR XML PATH('')

),1,1,SPACE(0))

) AS cols (name)

WHERE is_unique = 0

AND has_filter = 0

AND is_hypothetical = 0

AND type IN (1,2)

AND object_id IN (

SELECT object_id

FROM sys.objects

WHERE is_ms_shipped = 0

AND type = 'U'

)

)

-- Build a big statement to query index data

SELECT @sql = (

SELECT

'SELECT ''' + [schema_name] + ''' AS [schema_name],

''' + table_name + ''' AS table_name,

''' + index_name + ''' AS index_name,

can_be_unique =

CASE WHEN (

SELECT COUNT(*)

FROM (

SELECT ' + cols + ',COUNT(*) AS cnt

FROM ' + [schema_name] + '.' + [table_name] + '

GROUP BY ' + cols + '

HAVING COUNT(*) > 1

) AS data

) > 0

THEN 0

ELSE 1

END;'

FROM indexes

FOR XML PATH(''), TYPE

).value('.','nvarchar(max)');

-- prepare a table to receive results

DECLARE @results TABLE (

[schema_name] sysname,

[table_name] sysname,

[index_name] sysname,

[can_be_unique] bit

)

-- execute the script and pipe the results

INSERT @results

EXEC(@sql)

-- show candidate unique indexes

SELECT *

FROM @results

WHERE can_be_unique = 1

ORDER BY [schema_name], [table_name], [index_name]

The script should complete quite quickly, since you have convenient indexes in place. However, I suggest that you run it against a non-production copy of your database, as it will scan all the non-unique indexes found in the database.

The results will include all the indexes that don’t contain duplicate data. Whether you should make those indexes UNIQUE, only you can tell.

Some indexes may contain unique data unintentionally, but could definitely store duplicate data in the future. If you know your data domain, you will be able to spot the difference.
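For a single index, the statement that the script generates boils down to a GROUP BY over the key columns. Here is a minimal sketch of that check; the table dbo.Orders and its key columns CustomerId and OrderNumber are hypothetical names used only for illustration.

```sql
-- Hypothetical names: dbo.Orders with a non-unique index on (CustomerId, OrderNumber)
SELECT CustomerId, OrderNumber, COUNT(*) AS cnt
FROM dbo.Orders
GROUP BY CustomerId, OrderNumber
HAVING COUNT(*) > 1;
-- If no rows come back, the data currently stored would allow a UNIQUE index on this key.
```

Running the check by hand on one index is a good way to look at the actual duplicates when the script reports can_be_unique = 0.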

Non-unique indexes that should be unique

Defining the appropriate primary key and unique constraints is fundamental for a good database design.

One thing that I often see overlooked is that every index whose key completely includes the key of another UNIQUE index should in turn be created as UNIQUE. You could argue that such an index has probably been created by mistake, but that’s not always the case.

If you want to check your database for indexes that can be safely made UNIQUE, you can use the following script:

SELECT OBJECT_SCHEMA_NAME(uq.object_id) AS [schema_name],

OBJECT_NAME(uq.object_id) AS table_name,

uq.name AS unique_index_name,

nui.name AS non_unique_index_name

FROM sys.indexes AS uq

CROSS APPLY (

SELECT name, object_id, index_id

FROM sys.indexes AS nui

WHERE nui.object_id = uq.object_id

AND nui.index_id <> uq.index_id

AND nui.is_unique = 0

AND nui.has_filter = 0

AND nui.is_hypothetical = 0

) AS nui

WHERE is_unique = 1

AND has_filter = 0

AND is_hypothetical = 0

AND uq.object_id IN (

SELECT object_id

FROM sys.tables

)

AND NOT EXISTS (

SELECT column_id

FROM sys.index_columns AS uc

WHERE uc.object_id = uq.object_id

AND uc.index_id = uq.index_id

AND uc.is_included_column = 0

EXCEPT

SELECT column_id

FROM sys.index_columns AS nuic

WHERE nuic.object_id = nui.object_id

AND nuic.index_id = nui.index_id

AND nuic.is_included_column = 0

)

ORDER BY [schema_name], table_name, unique_index_name

You may wonder why you should bother making those indexes UNIQUE.

The answer is that constraints help the optimizer build better execution plans. Marking an index as UNIQUE tells the optimizer that at most one row can be found for each key value: that’s valuable information that can actually help it estimate the correct cardinality.

Does the script return any rows? Make those indexes UNIQUE, you’ll thank me later.
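One way to turn an existing index into a UNIQUE one is to recreate it in place with DROP_EXISTING. This is only a sketch: the index, table and column names are hypothetical, and the key columns, included columns and index options have to be copied from the definition of your own index.

```sql
-- Hypothetical names: IX_Orders_Customer_OrderNumber on dbo.Orders
-- Repeat the original key columns (and any INCLUDE list) exactly as they are today
CREATE UNIQUE NONCLUSTERED INDEX IX_Orders_Customer_OrderNumber
ON dbo.Orders (CustomerId, OrderNumber)
WITH (DROP_EXISTING = ON);
```

The statement fails if the key contains duplicate values, so nothing is lost if an assumption about the data turns out to be wrong. Dropping the index and creating it again as UNIQUE is the alternative if you prefer two explicit steps.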

SQL Server Agent in Express Edition

As you probably know, SQL Server Express doesn’t ship with SQL Server Agent.

This is a known limitation, and many people have offered alternative solutions to schedule jobs, including the Windows Task Scheduler and free or commercial third-party applications.

My favourite SQL Server Agent replacement to date is Denny Cherry’s Standalone SQL Agent, for two reasons:

- It uses msdb tables to read job information. This means that jobs, schedules and the like can be scripted using the same script you would use in the other editions.

- It’s open source and it was started by a person I highly respect.

However, while I still find it a great piece of software, there are a few downsides to take into account:

- It’s still a beta version and the project hasn’t been very active lately.

- There’s no GUI tool to edit jobs or monitor job progress.

- It fails to install when UAC is turned on

- It’s not 100% compatible with SQL Server 2012

- It doesn’t restart automatically when the SQL Server instance starts

- It requires sysadmin privileges

The UAC problem during installation is easy to solve: open an elevated command prompt and run the installer msi. Easy peasy.

As far as SQL Server 2012 is concerned, the service fails to start when connected to a 2012 instance. In the ERRORLOG file (the one you find in the Standalone SQL Agent directory, not SQL Server’s) you’ll quickly find the reason for the failure: it can’t create the stored procedure sp_help_job_SSA. I don’t know why this happens: I copied the definition of the stored procedure from a 2008 instance and it worked fine.

If you don’t have a SQL Server 2008 instance available, you can extract the definition of the stored procedure from the source code at CodePlex.

The fifth issue in the list above (no automatic restart) is a bit trickier to tackle. When the service loses the connection to the target SQL Server instance, it won’t reconnect automatically and it will remain idle until you cycle the service manually. In the ERRORLOG file you’ll find a message that resembles this:

Error connecting to SQL Instance. No connection attempt will be made until Sevice is restarted.

You can overcome this limitation using a startup stored procedure that restarts the service:

USE master

GO

EXEC sp_configure 'show advanced options',1

RECONFIGURE WITH OVERRIDE

EXEC sp_configure 'xp_cmdshell',1

RECONFIGURE WITH OVERRIDE

GO

USE master

GO

CREATE PROCEDURE startStandaloneSQLAgent

AS

BEGIN

SET NOCOUNT ON;

EXEC xp_cmdshell 'net stop "Standalone SQL Agent"'

EXEC xp_cmdshell 'net start "Standalone SQL Agent"'

END

GO

EXEC sp_procoption @ProcName = 'startStandaloneSQLAgent'

, @OptionName = 'startup'

, @OptionValue = 'on';

GO

However, you’ll probably notice that the SQL Server service account does not have sufficient rights to restart the service.

The following PowerShell script grants the SQL Server service account all the rights it needs. In order to run it, you need to download the code available at Rohn Edwards’ blog.

# Change to the display name of your SQL Server Express service

$service = Get-WmiObject win32_service |

where-object { $_.DisplayName -eq "SQL Server (SQLEXPRESS2008R2)" }

$serviceLogonAccount = $service.StartName

$ServiceAcl = Get-ServiceAcl "Standalone SQL Agent"

$ServiceAcl.Access

# Add an ACE allowing the service user Start and Stop service rights:

$ServiceAcl.AddAccessRule((New-AccessControlEntry -ServiceRights "Start,Stop" -Principal $serviceLogonAccount))

# Apply the modified ACL object to the service:

$ServiceAcl | Set-ServiceAcl

# Confirm the ACE was saved:

Get-ServiceAcl "Standalone SQL Agent" | select -ExpandProperty Access

After running this script from an elevated PowerShell instance, you can test whether the startup stored procedure has enough privileges by invoking it manually.
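The manual test can be sketched like this, assuming the procedure was created in master with the name used above. The first query is an extra check that the procedure is really flagged as a startup procedure.

```sql
USE master;
GO
-- Returns 1 when the procedure is marked to run at instance startup
SELECT OBJECTPROPERTY(OBJECT_ID('dbo.startStandaloneSQLAgent'), 'ExecIsStartup') AS is_startup;
GO
-- Run the procedure by hand: xp_cmdshell returns the output of net stop / net start,
-- so an "Access is denied" message here means the service account still lacks rights
EXEC dbo.startStandaloneSQLAgent;
GO
```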

If everything works as expected, you can restart the SQL Server Express instance and the Standalone SQL Agent service will restart as well.

In conclusion, Standalone SQL Agent is a good replacement for SQL Server Agent in Express edition and, while it suffers from some limitations, I still believe it’s the best option available.

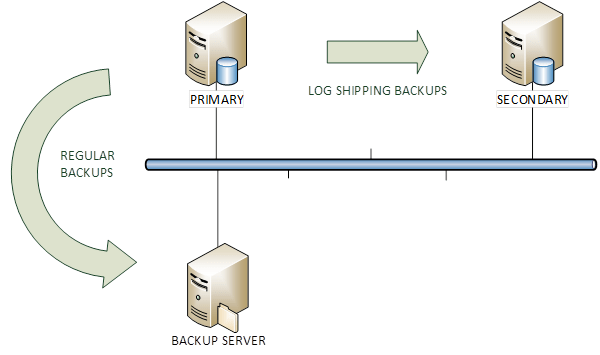

COPY_ONLY backups and Log Shipping

Last week I was in the process of migrating a couple of SQL Server instances from 2008 R2 to 2012.

In order to let the migration complete quickly, I set up log shipping from the old instance to the new instance. Obviously, the existing backup jobs had to be disabled, otherwise they would have broken the log chain.

That got me thinking: was there a way to keep both “regular” transaction log backups (taken by the backup tool) and the transaction log backups taken by log shipping?

The first thing that came to my mind was the COPY_ONLY option available since SQL Server 2005.

You probably know that COPY_ONLY backups are useful when you have to take a backup for a special purpose, for instance when you have to restore from production to test. With the COPY_ONLY option, database backups don’t break the differential base and transaction log backups don’t break the log chain.

My initial thought was that I could ship COPY_ONLY backups to the secondary and keep taking scheduled transaction log backups with the existing backup tools.

I was dead wrong.

Let’s see it with an example on a TEST database.

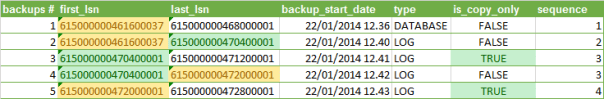

I took 5 backups:

- FULL database backup, to initialize the log chain. Please note that COPY_ONLY backups cannot be used to initialize the log chain.

- LOG backup

- LOG backup with the COPY_ONLY option

- LOG backup

- LOG backup with the COPY_ONLY option
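The sequence above can be reproduced with a handful of BACKUP statements. This is a sketch under a couple of assumptions: the TEST database uses the FULL recovery model, and the backup paths are hypothetical and must point to a folder the SQL Server service account can write to.

```sql
-- Hypothetical backup paths: change them to suit your environment
BACKUP DATABASE TEST TO DISK = 'C:\temp\TEST_full.bak' WITH INIT;
BACKUP LOG TEST TO DISK = 'C:\temp\TEST_log1.trn' WITH INIT;
BACKUP LOG TEST TO DISK = 'C:\temp\TEST_log2_copy.trn' WITH INIT, COPY_ONLY;
BACKUP LOG TEST TO DISK = 'C:\temp\TEST_log3.trn' WITH INIT;
BACKUP LOG TEST TO DISK = 'C:\temp\TEST_log4_copy.trn' WITH INIT, COPY_ONLY;
```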

The backup information can be queried from backupset in msdb:

SELECT

ROW_NUMBER() OVER(ORDER BY bs.backup_start_date) AS [backup #]

,first_lsn

,last_lsn

,backup_start_date

,type

,is_copy_only

,DENSE_RANK() OVER(ORDER BY type, bs.first_lsn) AS sequence

FROM msdb.dbo.backupset bs

WHERE bs.database_name = 'TEST'

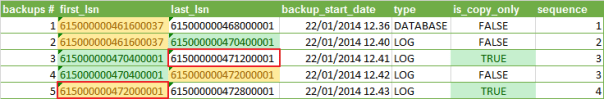

As you can see, the COPY_ONLY backups don’t truncate the transaction log and losing one of those backups wouldn’t break the log chain.

However, all backups always start from the first available LSN, which means that scheduled log backups taken without the COPY_ONLY option truncate the transaction log and make significant portions of the transaction log unavailable in the next COPY_ONLY backup.

You can see it clearly in the following picture: the LSNs highlighted in red should contain no gaps in order to be restored successfully to the secondary, but the regular TLOG backups break the log chain in the COPY_ONLY backups.

That means that there’s little or no point in taking COPY_ONLY transaction log backups, as “regular” backups will always create gaps in the log chain.

When log shipping is used, the secondary server is the only backup you can have, unless you keep the TLOG backups or use your backup tool directly to ship the logs.

Why on earth one would take a COPY_ONLY TLOG backup (more than one, at least) is beyond my comprehension, but that’s a whole different story.

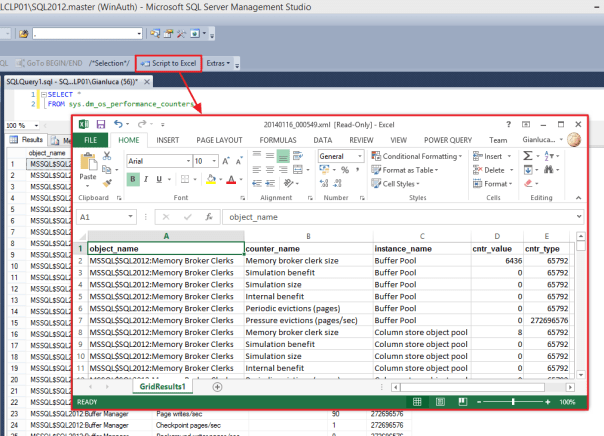

Open SSMS Query Results in Excel with a Single Click

The problem

One of the tasks that I often have to complete is to manipulate some data in Excel, starting from the query results in SSMS.

Excel is a very convenient tool for one-off reports, quick data manipulation and simple charts.

Unfortunately, SSMS doesn’t ship with a tool to export grid results to Excel quickly.

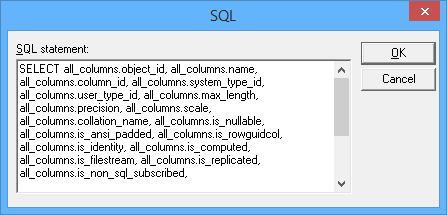

Excel offers some ways to import data from SQL queries, but none of those offers the rich query tools available in SSMS. A representative example is Microsoft Query: how am I supposed to edit a query in a text editor like this?

Enough said.

Actually, there are many ways to export data from SQL Server to Excel, including SSIS packages and the Import/Export wizard. Again, all those methods require writing your queries in a separate tool, often with very limited editing capabilities.

PowerQuery offers great support for data exploration, but it is a totally different beast and I don’t see it as an alternative to running SQL queries directly.

The solution

How can I edit my queries taking advantage of the query editing features of SSMS, review the results and then format the data directly in Excel?

The answer is SSMS cannot do that, but, fortunately, the good guys at Solutions Crew brought you a great tool that can do that and much more.

SSMSBoost is a free add-in that empowers SSMS with many useful features, among which exporting to Excel is just one. I highly suggest that you check out the feature list, because it’s really impressive.

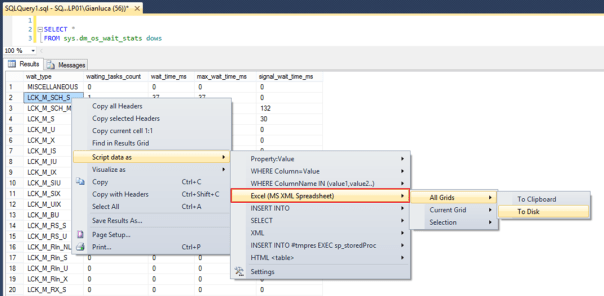

Once SSMSBoost is installed, every time you right click a results grid, a context menu appears that lets you export the grid data to several formats.

No surprises, one of those formats is indeed Excel.

The feature works great, even with relatively big result sets. However, it requires 5 clicks to create the Spreadsheet file and one more click to open it in Excel:

So, where is the single click I promised in the title of this post?

The good news is that SSMSBoost can be automated combining commands in macros to accomplish complex tasks.

Here’s how to create a one-click “open in Excel” command:

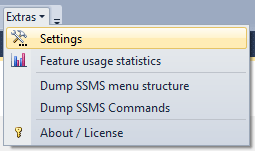

First, open the SSMSBoost settings window clicking the “Extras” button.

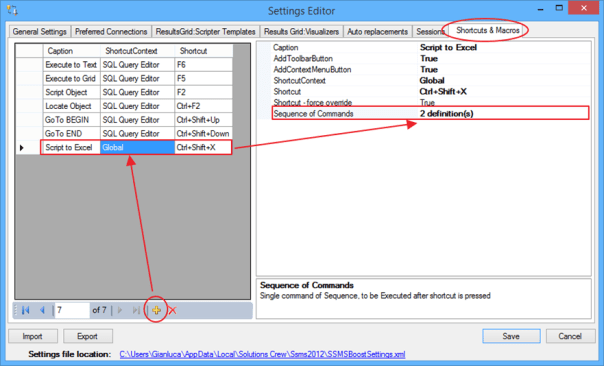

In the “Shortcuts & Macros” tab you can edit and add macros to the toolbar or the context menu and even assign a keyboard shortcut.

Clicking the “definitions” field opens the macro editor.

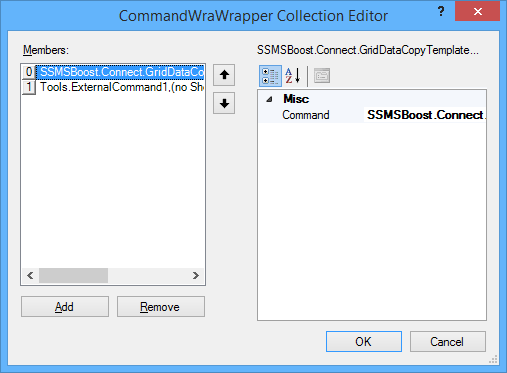

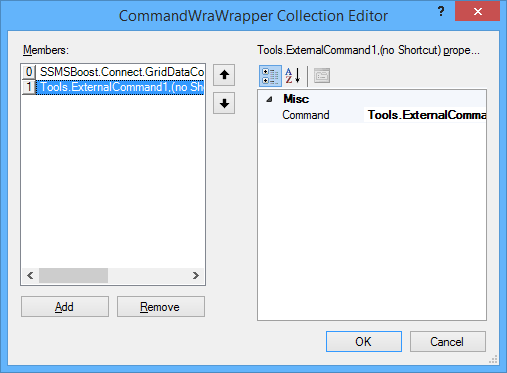

Select “Add” and choose the following command: “SSMSBoost.Connect.GridDataCopyTemplateAllGridsDisk3”. This command corresponds to the “Script all grids as Excel to disk” command in SSMSBoost.

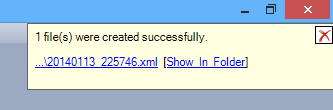

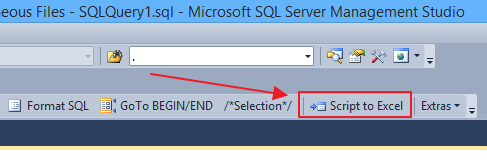

Now save everything with OK and close. You will notice a new button in your toolbar:

That button lets you export all grids to Excel in a single click.

You’re almost there: now you just need something to open the Excel file automatically, without the need for additional clicks.

To accomplish this task, you can use a PowerShell script, bound to a custom External Tool.

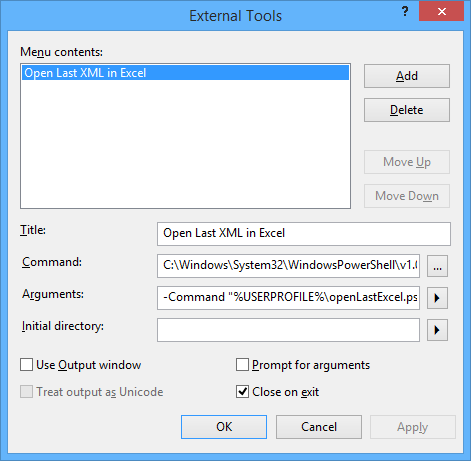

Open the External Tools editor (Tools, External Tools), click “Add” and type these parameters:

Title: Open last XML in Excel

Command: C:\Windows\System32\WindowsPowerShell\v1.0\powershell.exe

Arguments: -ExecutionPolicy Bypass -File "%USERPROFILE%\openLastExcel.ps1"

Click OK to close the External Tools editor.

This command lets you open the last XML file created in the SSMSBoost output directory, using a small PowerShell script that you have to create in your %USERPROFILE% directory.

The script looks like this:

## =============================================

## Author: Gianluca Sartori - @spaghettidba

## Create date: 2014-01-15

## Description: Open the last XML file in the SSMSBoost

## output directory with Excel

## =============================================

sl $env:UserProfile

# This is the SSMSBoost 2012 settings file

# If you have the 2008 version, change this path

# Sorry, I could not find a registry key to automate it.

$settingsFile = "$env:UserProfile\AppData\Local\Solutions Crew\Ssms2012\SSMSBoostSettings.xml"

# Open the settings file to look up the export directory

$xmldata=[xml](get-content $settingsFile)

$xlsTemplate = $xmldata.SSMSBoostSettings.GridDataCopyTemplates.ChildNodes |

Where-Object { $_.Name -eq "Excel (MS XML Spreadsheet)" }

$SSMSBoostPath = [System.IO.Path]::GetDirectoryName($xlsTemplate.SavePath)

$SSMSBoostPath = [System.Environment]::ExpandEnvironmentVariables($SSMSBoostPath)

# we filter out files created before (now -1 second)

$startTime = (get-date).addSeconds(-1);

$targetFile = $null;

while($targetFile -eq $null){

$targetFile = Get-ChildItem -Path $SSMSBoostPath |

Where-Object { $_.extension -eq '.xml' -and $_.lastWriteTime -gt $startTime } |

Sort-Object -Property LastWriteTime |

Select-Object -Last 1;

# file not found? Wait SSMSBoost to finish exporting

if($targetFile -eq $null) {

Start-Sleep -Milliseconds 100

}

};

$fileToOpen = $targetFile.FullName

# enclose the output file path in quotes if needed

if($fileToOpen -like "* *"){

$fileToOpen = "`"" + $fileToOpen + "`""

}

# open the file in Excel

# ShellExecute is much safer than messing with COM objects...

$sh = new-object -com 'Shell.Application'

$sh.ShellExecute('excel', "/r " + $fileToOpen, '', 'open', 1)

Now you just have to go back to the SSMSBoost settings window and edit the macro you created above.

In the definitions field click … to edit the macro and add a second step. The Command to select is “Tools.ExternalCommand1”.

Save and close everything and now your nice toolbar button will be able to open the export file in Excel automagically. Yay!

Troubleshooting

If nothing happens, you might need to change your PowerShell Execution Policy. Remember that SSMS is a 32-bit application and you have to set the Execution Policy for the x86 version of PowerShell.

Starting PowerShell x86 is not easy in Windows 8/8.1. The documentation says to look up “Windows PowerShell (x86)” in the Start menu, but I could not find it.

The easiest way I have found is through another External Tool in SSMS. Start SSMS as an Administrator (otherwise UAC will prevent you from changing the Execution Policy) and configure an external tool to run PowerShell. Once you’re in, type “Set-ExecutionPolicy RemoteSigned” and hit return. The external tool in your macro will now run without issues.

Bottom line

Nothing compares to SSMS when it comes down to writing queries, but Excel is the best place to format and manipulate data.

Now you have a method to take advantage of the best of both worlds. And it only takes one single click.

Enjoy.

Copy user databases to a different server with PowerShell

Sometimes you have to copy all user databases from a source server to a destination server.

Copying from development to test could be one reason, but I’m sure there are others.

Since the question came up on the forums at SQLServerCentral, I decided to modify a script I published some months ago to accomplish this task.

Here is the code:

## =============================================

## Author: Gianluca Sartori - @spaghettidba

## Create date: 2013-10-07

## Description: Copy user databases to a destination

## server

## =============================================

cls

sl "c:\"

$ErrorActionPreference = "Stop"

# Input your parameters here

$source = "SourceServer\Instance"

$sourceServerUNC = "SourceServer"

$destination = "DestServer\Instance"

# Shared folder on the destination server

# For instance "\\DestServer\D$"

$sharedFolder = "\\DestServer\sharedfolder"

# Path to the shared folder on the destination server

# For instance "D:"

$remoteSharedFolder = "PathOfSharedFolderOnDestServer"

$ts = Get-Date -Format yyyyMMdd

#

# Read default backup path of the source from the registry

#

$SQL_BackupDirectory = @"

EXEC master.dbo.xp_instance_regread

N'HKEY_LOCAL_MACHINE',

N'Software\Microsoft\MSSQLServer\MSSQLServer',

N'BackupDirectory'

"@

$info = Invoke-sqlcmd -Query $SQL_BackupDirectory -ServerInstance $source

$BackupDirectory = $info.Data

#

# Read master database files location

#

$SQL_Defaultpaths = "

SELECT *

FROM (

SELECT type_desc,

SUBSTRING(physical_name,1,LEN(physical_name) - CHARINDEX('\', REVERSE(physical_name)) + 1) AS physical_name

FROM master.sys.database_files

) AS src

PIVOT( MIN(physical_name) FOR type_desc IN ([ROWS],[LOG])) AS pvt

"

$info = Invoke-sqlcmd -Query $SQL_Defaultpaths -ServerInstance $destination

$DefaultData = $info.ROWS

$DefaultLog = $info.LOG

#

# Process all user databases

#

$SQL_FullRecoveryDatabases = @"

SELECT name

FROM master.sys.databases

WHERE name NOT IN ('master', 'model', 'tempdb', 'msdb', 'distribution')

"@

$info = Invoke-sqlcmd -Query $SQL_FullRecoveryDatabases -ServerInstance $source

$info | ForEach-Object {

try {

$DatabaseName = $_.Name

Write-Output "Processing database $DatabaseName"

$BackupFile = $DatabaseName + "_" + $ts + ".bak"

$BackupPath = $BackupDirectory + "\" + $BackupFile

$RemoteBackupPath = $remoteSharedFolder + "\" + $BackupFile

$SQL_BackupDatabase = "BACKUP DATABASE [$DatabaseName] TO DISK='$BackupPath' WITH INIT, COPY_ONLY, COMPRESSION;"

#

# Backup database to local path

#

Invoke-Sqlcmd -Query $SQL_BackupDatabase -ServerInstance $source -QueryTimeout 65535

Write-Output "Database backed up to $BackupPath"

$BackupPath = $BackupPath

$BackupFile = [System.IO.Path]::GetFileName($BackupPath)

$SQL_RestoreDatabase = "

RESTORE DATABASE [$DatabaseName]

FROM DISK='$RemoteBackupPath'

WITH RECOVERY, REPLACE,

"

$SQL_RestoreFilelistOnly = "

RESTORE FILELISTONLY

FROM DISK='$RemoteBackupPath';

"

#

# Move the backup to the destination

#

$remotesourcefile = $BackupPath.Substring(1, 2)

$remotesourcefile = $BackupPath.Replace($remotesourcefile, $remotesourcefile.replace(":","$"))

$remotesourcefile = "\\" + $sourceServerUNC + "\" + $remotesourcefile

Write-Output "Moving $remotesourcefile to $sharedFolder"

Move-Item $remotesourcefile $sharedFolder -Force

#

# Restore the backup on the destination

#

$i = 0

Invoke-Sqlcmd -Query $SQL_RestoreFilelistOnly -ServerInstance $destination -QueryTimeout 65535 | ForEach-Object {

$currentRow = $_

$physicalName = [System.IO.Path]::GetFileName($CurrentRow.PhysicalName)

if($CurrentRow.Type -eq "D") {

$newName = $DefaultData + $physicalName

}

else {

$newName = $DefaultLog + $physicalName

}

if($i -gt 0) {$SQL_RestoreDatabase += ","}

$SQL_RestoreDatabase += " MOVE '$($CurrentRow.LogicalName)' TO '$NewName'"

$i += 1

}

Write-Output "invoking restore command: $SQL_RestoreDatabase"

Invoke-Sqlcmd -Query $SQL_RestoreDatabase -ServerInstance $destination -QueryTimeout 65535

Write-Output "Restored database from $RemoteBackupPath"

#

# Delete the backup file

#

Write-Output "Deleting $($sharedFolder + "\" + $BackupFile) "

Remove-Item $($sharedFolder + "\" + $BackupFile) -ErrorAction SilentlyContinue

}

catch {

Write-Error $_

}

}

It’s a quick’n’dirty script and I’m sure there is something to fix here and there. Just drop a comment if you find something.

Ten features you had in Profiler that are missing in Extended Events

Oooooops!

I exchanged some emails about my post with Jonathan Kehayias and it looks like I was wrong on many of the points I made.

I don’t want to keep misleading information around and I definitely need to fix my wrong assumptions.

Unfortunately, I don’t have the time to correct it immediately and I’m afraid it will have to remain like this for a while.

Sorry for the inconvenience, I promise I will try to fix it in the next few days.

Error upgrading MDW from 2008R2 to 2012

Some months ago I posted a method to overcome some quirks in the MDW database in a clustered environment.

Today I tried to upgrade that clustered instance (in a test environment, fortunately) and I got some really annoying errors.

Actually, I got what I deserved for messing with the system databases and I wouldn’t even dare post my experience if it weren’t caused by something I suggested on this blog.

However, every cloud has a silver lining: many things can go wrong when upgrading a cluster and the resolution I will describe here can fit many failed upgrade situations.

So, what’s wrong with the solution I proposed back in March?

The offending line in that code is the following:

EXEC sp_rename 'core.source_info_internal', 'source_info_internal_ms'

What happened here? Basically, I renamed a table in the MDW and I created a view in its place.

One of the steps of the setup process tries to upgrade the MDW database with a script that is executed at the first startup on an upgraded cluster node.

The script fails and the following message is found in the ERRORLOG:

Creating table [core].[source_info_internal]...

2013-10-24 09:21:02.99 spid8s Error: 2714, Severity: 16, State: 6.

2013-10-24 09:21:02.99 spid8s There is already an object named 'source_info_internal' in the database.

2013-10-24 09:21:02.99 spid8s Error: 912, Severity: 21, State: 2.

2013-10-24 09:21:02.99 spid8s Script level upgrade for database 'master' failed because upgrade step 'upgrade_ucp_cmdw.sql' encountered error 3602, state 51, severity 25. This is a serious error condition which might interfere with regular operation and the database will be taken offline. If the error happened during upgrade of the 'master' database, it will prevent the entire SQL Server instance from starting. Examine the previous errorlog entries for errors, take the appropriate corrective actions and re-start the database so that the script upgrade steps run to completion.

2013-10-24 09:21:02.99 spid8s Error: 3417, Severity: 21, State: 3.

2013-10-24 09:21:02.99 spid8s Cannot recover the master database. SQL Server is unable to run. Restore master from a full backup, repair it, or rebuild it. For more information about how to rebuild the master database, see SQL Server Books Online.

Every attempt to bring the SQL Server resource online results in a similar error message.

The only way to fix the error is to restore the initial state of the MDW database, renaming the table core.source_info_internal_ms to its original name.

But how can it be done, since the instance refuses to start?

Microsoft added and documented trace flag 902, which can be used to bypass the upgrade scripts at instance startup.

Remember that the startup parameters of a clustered instance cannot be modified while the resource is offline, because the registry checkpointing mechanism will restore the registry values stored in the quorum disk while bringing the resource online.

There are three ways to start the instance in this situation:

- modify the startup parameters by disabling checkpointing

- modify the startup parameters in the quorum registry hives

- start the instance manually at the command prompt

The third method is the simplest one in this situation and is what I ended up doing.

Once the instance started, I renamed the view core.source_info_internal and renamed core.source_info_internal_ms to its original name.
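The two renames look more or less like this. Take it as a sketch: I’m assuming the MDW database is called MDW (use the name of your own database), the instance has been started at the command prompt with the trace flag (sqlservr.exe -T902, plus -s and the instance name for a named instance), and the new name given to the view is just a placeholder.

```sql
-- Assumption: the MDW database is named MDW
USE MDW;
GO
-- Move the custom view out of the way (the new name is hypothetical)
EXEC sp_rename 'core.source_info_internal', 'source_info_internal_view';
-- Give the original table its name back
EXEC sp_rename 'core.source_info_internal_ms', 'source_info_internal';
GO
```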

The instance could then be stopped (CTRL+C at the command prompt or SHUTDOWN WITH NOWAIT in sqlcmd) and restarted removing the trace flag.

With the MDW in its correct state, the upgrade scripts completed without errors and the clustered instance could be upgraded to SQL Server 2012 without issues.

Lessons learned:

- Never, ever mess with the system databases. The MDW is not technically a system database, but it’s shipped by Microsoft and should be kept untouched. If you decide you absolutely need to modify something there, remember to undo your changes before upgrading and applying service packs.

- Always test your environment before upgrading. It took me 1 hour to fix the issue and not every upgrade scenario tolerates 1 hour of downtime. Think about it.

- Test your upgrade.

- Did I mention you need to test?

Jokes aside, I caught my error in a test environment and I’m happy it was not in production.

As the saying goes, better safe than sorry.

SQL Server services are gone after upgrading to Windows 8.1

Yesterday I upgraded my laptop to Windows 8.1 and everything seemed to have gone smoothly.

I really like the improvements in Windows 8.1 and I think they’re worth the hassle of an upgrade if you’re still on Windows 8.

As I was saying, everything seemed to upgrade smoothly. Unfortunately, today I found out that SQL Server services were gone.

My configuration manager looked like this:

My laptop had an instance of SQL Server 2012 SP1 Developer Edition and the Windows upgrade process had deleted all SQL Server services but SQL Server Browser.

I thought that a repair would fix the issue, so I took out my SQL Server iso and ran the setup.

Unfortunately, during the repair process, something went wrong and it complained multiple times about “no mappings between Security IDs and account names” or something similar.

Anyway, the setup completed and the services were back in place, but were totally misconfigured.

SQL Server Agent had start mode “disabled” and the service account had been changed to “localsystem” (go figure…)

After changing start mode and service accounts, everything was back to normal.

I hope this post helps others that are facing the same issue.

Check SQL Server logins with weak password

SQL Server logins can implement the same password policies found in Active Directory to make sure that strong passwords are being used.

Unfortunately, especially for servers upgraded from previous versions, the password policies are often disabled and some logins have very weak passwords.

In particular, some logins could have the password set equal to the login name, which would be one of the first things I would try in order to hack a server.

Are you sure none of your logins has such a poor password?

PowerShell to the rescue!

try {

if((Get-PSSnapin -Name SQlServerCmdletSnapin100 -ErrorAction SilentlyContinue) -eq $null){

Add-PSSnapin SQlServerCmdletSnapin100 -ErrorAction Stop

}

}

catch {

Write-Error "This script requires the SQLServerCmdletSnapIn100 snapin"

exit

}

cls

# Query server names from your Central Management Server

$qry = "

SELECT server_name

FROM msdb.dbo.sysmanagement_shared_registered_servers

"

$servers = Invoke-Sqlcmd -Query $qry -ServerInstance "YourCMSServerGoesHere"

# Extract SQL Server logins

# Why syslogins and not sys.server_principals?

# Believe it or not, I still support a couple of SQL Server 2000

$qry_logins = "

SELECT loginname, sysadmin

FROM syslogins

WHERE isntname = 0

AND loginname NOT LIKE '##%##'

"

$dangerous_logins = @()

$servers | % {

$currentServer = $_.server_name

$logins = Invoke-Sqlcmd -Query $qry_logins -ServerInstance $currentServer

$logins | % {

$currentLogin = $_.loginname

$isSysAdmin = $_.sysadmin

try {

# Attempt logging in with login = password

$one = Invoke-Sqlcmd -Query "SELECT 1" -ServerInstance $currentServer -Username $currentLogin -Password $currentLogin -ErrorAction Stop

# OMG! Login successful

# Add the login to $dangerous_logins

$info = @{}

$info.LoginName = $currentLogin

$info.Sysadmin = $isSysAdmin

$info.ServerName = $currentServer

$loginInfo = New-Object -TypeName PsObject -Property $info

$dangerous_logins += $loginInfo

}

catch {

# If the login attempt fails, don't add the login to $dangerous_logins

}

}

}

#display dangerous logins

$dangerous_logins
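If you only need to check a single instance running SQL Server 2008 or later, a similar test can be done in plain T-SQL with PWDCOMPARE. Note that this is a different technique from the script above: it compares the login name against the stored password hash instead of attempting a real login, so it needs permission to read the hashes in sys.sql_logins and it doesn’t work on SQL Server 2000. A minimal sketch:

```sql
-- Logins whose password is equal to the login name or is blank
SELECT name,
       IS_SRVROLEMEMBER('sysadmin', name) AS is_sysadmin
FROM sys.sql_logins
WHERE PWDCOMPARE(name, password_hash) = 1
   OR PWDCOMPARE('', password_hash) = 1;
```

Since no login attempt is made, this check leaves no failed logins in the error log and doesn’t count towards account lockout.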